Large language model (LLM) architecture is the structured design of AI systems that understand and generate human-like text. Built on transformers and trained on massive datasets, LLMs power applications in technology, education, and business. London’s AI ecosystem uses these architectures to enhance communication, automate workflows, and innovate across multiple sectors.

How Does Large Language Model Architecture Function?

LLMs operate through deep neural networks made up of many stacked transformer layers. Input text is tokenized, embedded into dense vectors, and processed through self-attention mechanisms that capture context and relationships across tokens. Feedforward layers transform these representations, and a decoding step generates coherent, context-aware output one token at a time. This architecture supports tasks such as translation, summarization, and content creation.
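The tokenize, embed, and attend steps described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the three-word vocabulary, the 2-dimensional embedding table, and the function names are all assumptions made for the example, and the embeddings are used directly as queries, keys, and values, whereas a real transformer layer first applies learned projection matrices.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(embeddings):
    """Single-head scaled dot-product self-attention.

    Simplification (assumption): queries, keys, and values are the
    embeddings themselves, with no learned projections.
    """
    d = len(embeddings[0])
    outputs, all_weights = [], []
    for q in embeddings:
        # Similarity of this token to every token, scaled by sqrt(d)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
                  for k in embeddings]
        weights = softmax(scores)
        # Each output is the attention-weighted average of all value vectors
        out = [sum(w * v[j] for w, v in zip(weights, embeddings))
               for j in range(d)]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical toy vocabulary and embedding table
vocab = {"the": 0, "cat": 1, "sat": 2}
embedding_table = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

tokens = "the cat sat".split()                      # tokenization
token_ids = [vocab[t] for t in tokens]              # token -> id
embedded = [embedding_table[i] for i in token_ids]  # id -> dense vector
contextual, attn_weights = self_attention(embedded)
```

Each row of `attn_weights` sums to 1 and shows how strongly one token attends to every token in the sequence, which is how context is mixed into each token's representation.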

What Are the Core Components of Large Language Model Architecture?

The main components of LLMs include:

- Tokenization Layer: Converts text into discrete tokens for processing.
- Embedding Layer: Translates tokens into dense vector representations.
- Self-Attention Layers: Capture contextual relationships across tokens.
- Feedforward Neural Layers: Transform and refine information.
- Decoding Layer: Generates text or predictions based on learned patterns.

These components work together to process vast linguistic data and produce outputs that mimic human language understanding.
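To show how the five components connect, here is a toy end-to-end sketch in plain Python with one function per component. Everything in it is an assumption made for illustration: the three-token vocabulary, the hand-picked weight matrices (a real model learns billions of weights during training), and the function names. It predicts a single next token by greedy selection.

```python
import math

VOCAB = ["<unk>", "hello", "world"]  # hypothetical toy vocabulary
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}

# Hypothetical hand-picked weights; a trained model would learn these.
EMBEDDINGS = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # one 2-d vector per token
W_FF = [[0.0, 1.0], [1.0, 0.0]]                    # feedforward weights
W_OUT = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]       # projection to vocabulary

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def tokenize(text):
    """Tokenization layer: whitespace split, unknown words map to id 0."""
    return [TOKEN_TO_ID.get(word, 0) for word in text.lower().split()]

def embed(token_ids):
    """Embedding layer: look up a dense vector for each token id."""
    return [EMBEDDINGS[i] for i in token_ids]

def attend(vectors):
    """Self-attention layer (single head, no learned projections)."""
    d = len(vectors[0])
    result = []
    for q in vectors:
        scores = [dot(q, k) / math.sqrt(d) for k in vectors]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        weights = [e / sum(exps) for e in exps]
        result.append([sum(w * v[j] for w, v in zip(weights, vectors))
                       for j in range(d)])
    return result

def feedforward(vec):
    """Feedforward layer: one linear map followed by ReLU."""
    return [max(0.0, dot(row, vec)) for row in W_FF]

def decode(vec):
    """Decoding layer: score every vocabulary entry, pick the highest."""
    logits = [dot(row, vec) for row in W_OUT]
    best = max(range(len(logits)), key=lambda i: logits[i])
    return VOCAB[best]

def predict_next(text):
    """Run the full pipeline and return the predicted next token.

    Assumes the text contains at least one word.
    """
    context = attend(embed(tokenize(text)))
    return decode(feedforward(context[-1]))  # last position predicts next

print(predict_next("hello"))  # world
```

With these hand-picked weights the feedforward layer simply swaps the two embedding dimensions, so "hello" maps to "world" and vice versa. Production models stack many attention and feedforward layers and usually sample from the output probabilities rather than always taking the top score.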

Which Technologies Support Large Language Model Architectures?

LLMs rely on transformer architectures executed on high-performance GPUs or TPUs. Frameworks such as TensorFlow and PyTorch facilitate model development, while cloud platforms like AWS SageMaker and Google Cloud AI enable scalable training. Model pruning and quantization shrink models and cut inference cost, and data parallelism spreads training across many devices. Together these techniques reduce compute and memory requirements, making deployment feasible for London’s demanding AI applications.
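As an illustration of one of these techniques, the sketch below shows symmetric 8-bit quantization in plain Python: floating-point weights are mapped to integers in the range -127 to 127 using a single scale factor, then mapped back. It is a simplified, per-tensor scheme chosen for clarity; the function names and sample weights are assumptions, and frameworks such as PyTorch and TensorFlow ship their own, more sophisticated quantization tooling.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 if all weights are zero
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Map the stored integers back to approximate float weights."""
    return [q * scale for q in quantized]

weights = [0.5, -1.0, 0.25, 0.0]  # hypothetical sample weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding error per weight is at most half of one quantization step
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Storing each weight in 8 bits instead of 32 cuts memory use roughly fourfold, at the cost of the small rounding error measured by `max_error`.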

Why Is Large Language Model Architecture Important?

LLM architecture underpins AI systems capable of comprehending and generating natural language with contextual accuracy. In London, it enables innovations in customer service, content creation, education, and business intelligence. By automating language-driven tasks and providing intelligent insights, LLMs offer organizations competitive advantages while improving efficiency and the accessibility of information.

Who Develops and Uses Large Language Models in London?

London hosts AI research labs, startups, and tech companies developing and deploying LLMs. Users span enterprises, educational institutions, government agencies, healthcare providers, and creative industries. These models enhance communication, automate content generation, and provide data-driven insights tailored to regional linguistic and business contexts.

When Were Large Language Model Architectures Established and How Have They Evolved?

Transformer-based LLMs rose to prominence after the transformer architecture was introduced in 2017. Since then, architectures have grown in scale, efficiency, and capability, incorporating innovations like sparse attention and multi-modal inputs. London’s AI community actively adopts these advancements, integrating ethical AI practices and regulatory compliance while exploring novel applications for business and research.

Where Is Large Language Model Architecture Applied in London?

LLMs are applied across natural language understanding, machine translation, chatbots, legal document analysis, and educational tools. Businesses use these models to automate customer support, provide content recommendations, and perform analytics. London’s finance, public services, and creative sectors rely on LLMs to enhance workflow efficiency and enable intelligent decision-making.

Does Large Language Model Architecture Present Challenges?

Yes. Challenges include high computational costs, data biases, limited interpretability, and ethical concerns. London’s AI developers address these through responsible AI frameworks, transparency initiatives, and bias mitigation strategies. Optimizing models for energy efficiency and fairness supports sustainable, trustworthy AI deployment.

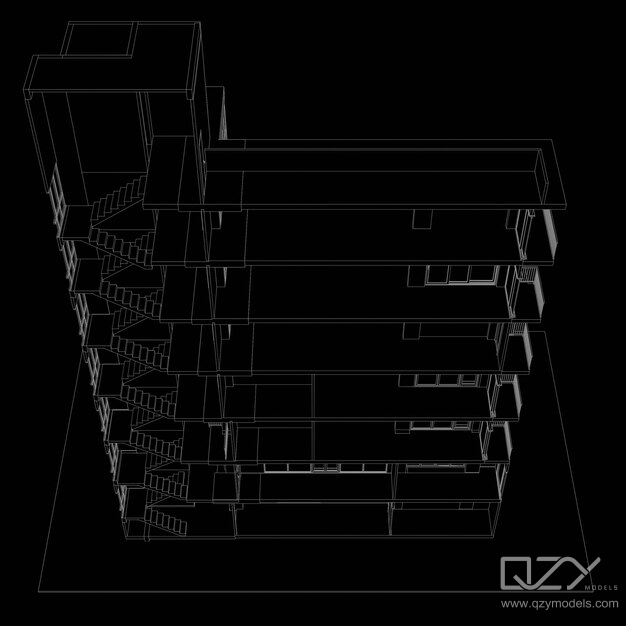

Can QZY Models Leverage Large Language Model Architecture?

QZY Models can integrate LLMs to enhance architectural client services by automating textual content generation, improving project documentation, and creating AI-driven interactive presentations. Combining the tactile precision of physical models with digital intelligence allows QZY Models to elevate client communication and operational efficiency in London’s competitive architecture market.

QZY Models Expert Views

“Large Language Model architecture represents a leap in AI’s ability to understand and generate human language. At QZY Models, we see immense potential in coupling LLM-driven insights with our physical architectural models. This integration enhances client engagement, streamlines workflow, and bridges the gap between tangible design and intelligent digital content. In London’s innovative landscape, LLMs empower creativity, efficiency, and informed decision-making.” — Richie Ren, Founder of QZY Models

Conclusion: Key Takeaways and Actionable Advice

Large Language Model architecture is critical for modern AI, leveraging transformers and scalable computation to process and generate complex language. London-based organizations benefit from applications in communication, analytics, and automation, while addressing ethical and operational challenges. Firms like QZY Models can harness LLM capabilities to complement physical model services, creating a synergy of digital intelligence and tactile expertise for superior client experiences.

Frequently Asked Questions

What is the core technology behind large language models?

Transformers with self-attention mechanisms form the foundation, enabling LLMs to process contextual information effectively.

How do large language models handle context in text?

They use multi-head self-attention layers to capture dependencies between words across long sequences.

What infrastructure is needed to train LLMs?

High-performance GPUs or TPUs and cloud platforms support scalable, efficient training and deployment.

Are there ethical concerns with large language models?

Yes. Concerns include bias, privacy, and limited interpretability, which responsible AI practices and transparency help mitigate.

How can QZY Models benefit from LLM technology?

By integrating AI-driven text generation, client interaction automation, and enhanced architectural presentations.