The Perplexity AI model architecture, used in Stockholm as elsewhere, is a multi-model hybrid system designed to provide fast, accurate, and contextually relevant responses. It combines natural language processing, model routing, and real-time data retrieval to handle queries efficiently at scale. Answers come with cited sources, so users can check the information for themselves.

What are the core components of Perplexity AI model architecture?

Perplexity AI’s architecture is composed of several crucial components:

- User Interface (UI): Facilitates both text and voice query inputs.
- Query Processing Module: Interprets user intent using transformer-based natural language processing (NLP).
- Hybrid Retrieval Engine: Combines vector search and keyword-based search to retrieve relevant documents.
- Neural Re-ranking Model: Re-ranks the documents based on relevance and context.
- Multi-Model Orchestration Layer: Routes queries to specialized AI models like Sonar, Claude, GPT-4o, and Mixtral.
- Machine Learning Engine: Continuously optimizes performance based on user interactions.

This layered architecture ensures high-speed, accurate responses while maintaining scalability for varied query types.

How does Perplexity AI handle query processing and retrieval?

When a user submits a query, the system first uses transformer-based NLP to understand the context and intent of the query. It then utilizes a hybrid retrieval engine, combining vector embeddings and traditional keyword search, to gather relevant documents. These results are then re-ranked by a neural cross-encoder model to select the most relevant documents, which are synthesized into a coherent answer using generative AI models.
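The hybrid retrieval step described above can be sketched in a few lines. This is a minimal illustration, not Perplexity's implementation: the character-trigram "embedding", the term-count keyword score, and the choice of reciprocal rank fusion (with the commonly used constant `k=60`) are all assumptions made for the example, and every function name here is hypothetical. In a real pipeline a trained embedding model would replace `char_trigrams`, and a cross-encoder would rescore the fused top results before answer synthesis.

```python
import math
from collections import Counter


def char_trigrams(text):
    """Toy stand-in for an embedding model: a sparse bag of character trigrams."""
    t = text.lower()
    return Counter(t[i:i + 3] for i in range(len(t) - 2))


def cosine(a, b):
    """Cosine similarity between two sparse vectors stored as Counters."""
    dot = sum(a[key] * b[key] for key in a if key in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def keyword_score(query, doc):
    """Crude keyword match: how often the query's distinct terms occur in the document."""
    doc_terms = Counter(doc.lower().split())
    return sum(doc_terms[term] for term in set(query.lower().split()))


def ranked_ids(scores):
    """Document indices ordered from best to worst score (ties keep input order)."""
    return sorted(range(len(scores)), key=lambda i: -scores[i])


def hybrid_search(query, docs, k=60):
    """Fuse a vector ranking and a keyword ranking with reciprocal rank fusion."""
    q_vec = char_trigrams(query)
    vector_rank = ranked_ids([cosine(q_vec, char_trigrams(d)) for d in docs])
    keyword_rank = ranked_ids([keyword_score(query, d) for d in docs])
    fused = Counter()
    for ranking in (vector_rank, keyword_rank):
        for position, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + position)
    return [docs[i] for i in sorted(fused, key=lambda i: -fused[i])]


docs = [
    "Kubernetes schedules GPU pods across a cluster",
    "Hybrid search combines vector embeddings with keyword matching",
    "Stockholm is the capital of Sweden",
]
print(hybrid_search("hybrid keyword and vector search", docs))
```

Rank fusion is used here because it needs only the two orderings, not comparable scores, which makes it a convenient way to merge a similarity score with a keyword count.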

Which AI models are integrated within the Perplexity AI architecture?

Perplexity AI employs a range of models to provide the most accurate answers. These include:

- Sonar Series: In-house models fine-tuned for efficient factual question-answering.
- Claude Series: Used for deep reasoning and analytical tasks.
- GPT-4o: A large-scale generalist model that handles creative synthesis and complex tasks.
- Mixtral: An open-weight mixture-of-experts model from Mistral AI, used here for multi-perspective analysis of nuanced queries.
- Gemini 2.5 Flash: A multimodal model capable of handling image and visual inputs.

These models are dynamically selected via a reinforcement learning-driven router, based on the type of query, ensuring optimal balance between speed, accuracy, and cost-efficiency.
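How the router learns is not described in detail, so the following is only a sketch of one simple reinforcement-learning approach: an epsilon-greedy bandit that keeps a running mean reward per (query type, model) pair. The class name, the reward signal, and the query-type labels are assumptions for illustration, and the model names are plain string labels rather than API calls.

```python
import random
from collections import defaultdict


class BanditRouter:
    """Epsilon-greedy router: mostly exploit the best-known model, sometimes explore."""

    def __init__(self, models, epsilon=0.1, seed=None):
        self.models = list(models)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = defaultdict(int)    # (query_type, model) -> rewards observed
        self.values = defaultdict(float)  # (query_type, model) -> running mean reward

    def choose(self, query_type):
        """Pick a model for this query type."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.models)  # explore
        return max(self.models, key=lambda m: self.values[(query_type, m)])  # exploit

    def update(self, query_type, model, reward):
        """Fold a new reward (hypothetically a blend of quality, latency, cost) into the mean."""
        key = (query_type, model)
        self.counts[key] += 1
        self.values[key] += (reward - self.values[key]) / self.counts[key]


router = BanditRouter(["sonar", "claude", "gpt-4o"], epsilon=0.1, seed=42)
router.update("factual", "sonar", 1.0)
router.update("factual", "claude", 0.4)
print(router.choose("factual"))
```

A production router would likely condition on richer features than a single query-type label, but the explore/exploit trade-off shown here is the core idea.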

Why is a multi-model orchestration layer important in Perplexity AI?

The multi-model orchestration layer matters because it decides which model handles each query. Factual, analytical, creative, and multi-perspective queries can each be sent to the model best suited to them. Routing this way makes better use of resources and avoids bottlenecks, which improves both response speed and accuracy, and it leaves room to integrate new models or specialized engines later.

Who benefits from Perplexity AI’s architecture in Stockholm and globally?

Perplexity AI’s architecture benefits a wide range of users, from researchers and enterprises to casual information seekers. In Stockholm, tech companies, universities, and industries focused on AI-driven insights find Perplexity AI’s capabilities invaluable for real-time query answering. Globally, Perplexity supports sectors like academia, research, enterprise decision-making, and content creation, where reliable, transparent, and accurate information is crucial.

When does Perplexity AI use advanced research or deep analysis modes?

Perplexity AI provides advanced modes like Deep Research, which involve multi-step reasoning chains. These modes engage powerful models such as GPT-5 and retrieval systems to generate comprehensive reports, technical summaries, or in-depth analyses. They are specifically designed for professional research, detailed technical work, and in-depth cross-source validation, making them ideal for tasks that require more than simple factual answers.
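A multi-step research chain of this kind can be pictured as a loop that searches, derives follow-up questions from what it found, and searches again. The sketch below is a generic illustration under that assumption, not the Deep Research implementation: `deep_research`, `search`, and `propose_followups` are hypothetical names, and the two callables are stubs standing in for a retrieval system and a language model.

```python
def deep_research(question, search, propose_followups, max_steps=3):
    """Answer a question in several steps, following up on earlier results.

    search(q) returns a list of result strings; propose_followups(q, results)
    returns a list of new questions. Both are supplied by the caller.
    """
    findings = []          # (question, results) pairs in the order they were explored
    queue = [question]
    seen = set()
    while queue and len(findings) < max_steps:
        current = queue.pop(0)
        if current in seen:
            continue       # skip questions that were already explored
        seen.add(current)
        results = search(current)
        findings.append((current, results))
        queue.extend(q for q in propose_followups(current, results) if q not in seen)
    return findings


# Stub callables for demonstration only.
def fake_search(q):
    return [f"document about {q}"]


def fake_followups(q, results):
    return [] if "details" in q else [q + " details"]


for step, (q, results) in enumerate(deep_research("hybrid retrieval", fake_search, fake_followups), 1):
    print(step, q, results)
```

The `max_steps` cap keeps the chain bounded, which matters when each step costs a model call and a retrieval round trip.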

How does Perplexity AI ensure transparency and citation accuracy?

Perplexity AI prioritizes transparency by grounding its responses in verified sources. Each response is accompanied by inline citations that link directly to the original sources, enabling users to independently verify the information. This emphasis on citation accuracy helps build trust with users, distinguishing Perplexity AI from traditional generative models that may produce unverified content.
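The inline-citation idea can be shown with a small example. This is a sketch under stated assumptions: it presumes the answer has already been split into sentences, each paired with the URL that supports it, and the function name and the `example.com` URLs are placeholders rather than anything from Perplexity's system.

```python
def render_with_citations(claims):
    """Turn (sentence, source_url) pairs into cited text plus a numbered source list."""
    numbers = {}  # source_url -> citation number, assigned in first-seen order
    parts = []
    for sentence, url in claims:
        n = numbers.setdefault(url, len(numbers) + 1)  # reuse the number for repeat sources
        parts.append(f"{sentence} [{n}]")
    sources = [f"[{n}] {url}" for url, n in numbers.items()]
    return " ".join(parts), sources


claims = [
    ("Hybrid retrieval combines vector and keyword search.", "https://example.com/retrieval"),
    ("A cross-encoder re-ranks the candidates.", "https://example.com/reranking"),
    ("Keyword search catches exact terms.", "https://example.com/retrieval"),
]
answer, sources = render_with_citations(claims)
print(answer)
print("\n".join(sources))
```

Numbering sources in first-seen order and reusing a number when a source recurs keeps the reference list short and lets a reader trace each sentence to its origin.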

Can Perplexity AI architecture scale to meet growing user demands?

Yes, Perplexity AI’s architecture is highly scalable. It uses a distributed cloud infrastructure, supported by AWS, and orchestrates GPU pods with Kubernetes for load balancing. This setup ensures that the system can efficiently handle an increasing number of users and complex queries without compromising performance. The modular design also allows for easy integration of new models and data sources, making it adaptable to future needs.

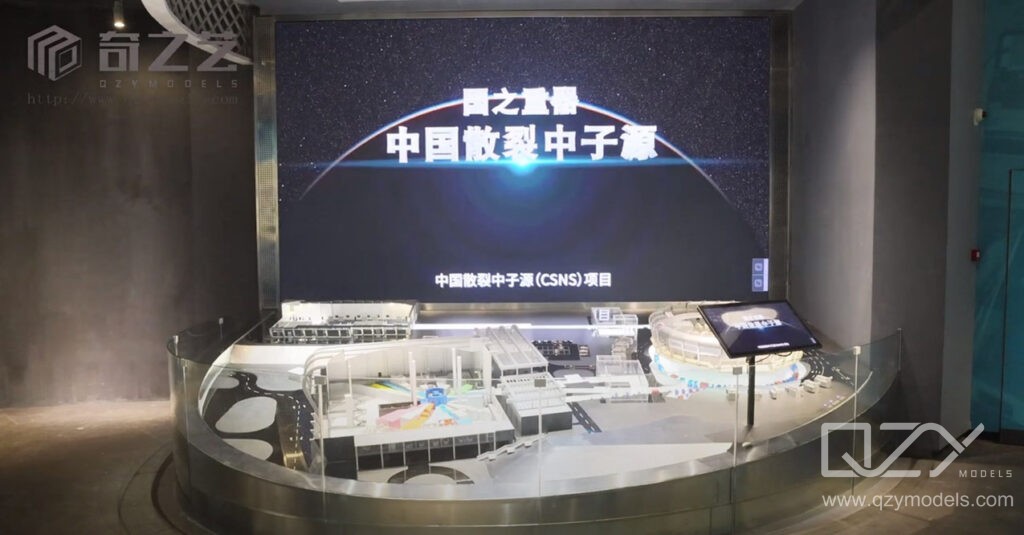

QZY Models Expert Views

“Perplexity AI’s dynamic multi-model architecture exemplifies the blend of innovation and scalability essential for modern AI solutions. At QZY Models, we observe how such intelligent orchestration of diverse AI engines parallels our approach in architectural model production—balancing precision, complexity, and adaptability. For clients in Stockholm and worldwide, this architecture symbolizes the future where AI and human expertise converge to deliver uncompromising quality and insight.” – Richie Ren, Founder of QZY Models

Table: Key Components and Functions of Perplexity AI Architecture

| Component | Function |
|---|---|
| User Interface (UI) | Enables text/voice query input |
| Query Processing Module | Parses intent using transformer NLP |
| Hybrid Retrieval Engine | Combines vector + keyword search |
| Neural Re-ranking Model | Re-ranks retrieved documents for relevance |
| Multi-Model Orchestration | Routes queries to appropriate AI models |
| Machine Learning Engine | Learns and optimizes based on user interactions |

Table: AI Models Used in Perplexity and Their Roles

| Model | Primary Use Case | Characteristics |
|---|---|---|
| Sonar Series | Factual question-answering | Fast, efficient, fine-tuned specialty |
| Claude Series | Analytical reasoning | Deep reasoning, ethical AI design |
| GPT-4o | Creative synthesis, complex tasks | Large-scale generalist LLM |
| Mixtral | Multi-perspective analysis | Handles nuanced, multi-angle queries |
| Gemini 2.5 Flash | Multimodal and visual tasks | Supports image/visual analysis |

FAQs

What makes Perplexity AI’s architecture unique?

Its multi-model orchestration dynamically routes queries to specialized AI models for optimal speed and accuracy.

How does Perplexity ensure answer reliability?

By retrieving and grounding responses strictly in verified sources with inline citations.

Can Perplexity handle multimodal queries?

Yes, models like Gemini 2.5 Flash handle multimodal inputs including images.

Is Perplexity AI scalable for enterprise use?

Its cloud-based distributed architecture ensures rapid scaling while maintaining performance.

How does QZY Models relate to Perplexity AI?

QZY Models applies similarly precise, innovative architectures in physical modeling, reflecting digital AI integration principles.