Miniature models in natural language processing (NLP) are compact, efficient AI language models designed to perform language understanding and generation tasks with fewer parameters and lower resource needs, enabling deployment on edge devices and in resource-constrained environments. These models maintain strong performance through specialized training and architecture optimizations.

What Are Miniature Models in NLP?

Miniature models, also known as small language models (SLMs), are scaled-down versions of larger NLP models built to process language efficiently using fewer parameters—ranging from millions to a few billion—compared to the hundreds of billions in large models. They balance performance with computational efficiency for applications like mobile apps and offline processing.

Put simply, miniature models are the “compact cars” of artificial intelligence: they do many of the same things as larger models but are easier to run on devices with limited computing power, such as phones or offline systems. Because they have far fewer parameters, they are faster and cheaper to run, which makes them practical for everyday applications where massive computing resources are not available.
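To make the parameter counts above more concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy at different numeric precisions. The model sizes and the helper name `weight_memory_gib` are illustrative assumptions, not measurements of any particular model, and real deployments also need memory for activations and runtime overhead.

```python
def weight_memory_gib(num_params: int, bytes_per_param: float) -> float:
    """Approximate memory needed to hold the weights alone, in GiB."""
    return num_params * bytes_per_param / (1024 ** 3)


# Illustrative sizes only: "millions", "a few billion", "hundreds of billions".
sizes = {"100M": 100_000_000, "3B": 3_000_000_000, "175B": 175_000_000_000}

# Bytes used per parameter at common storage precisions.
precisions = {"fp16": 2, "int8": 1, "int4": 0.5}

for name, n in sizes.items():
    row = ", ".join(
        f"{p}: {weight_memory_gib(n, b):.2f} GiB" for p, b in precisions.items()
    )
    print(f"{name:>5} params -> {row}")
```

Under these assumptions, a model with a few billion parameters can fit in the memory of a phone-class device once it is stored at reduced precision, while a model with hundreds of billions of parameters cannot.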

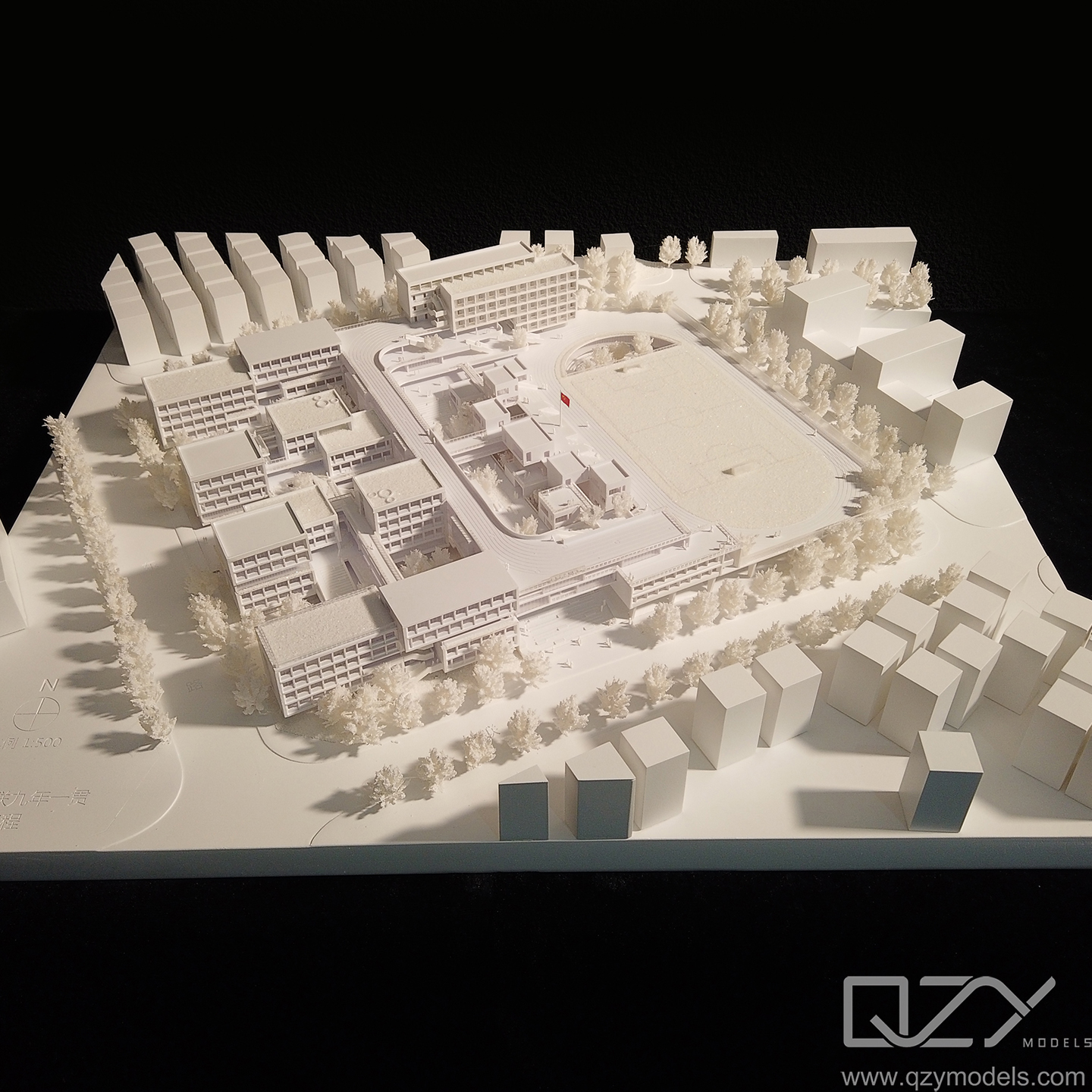

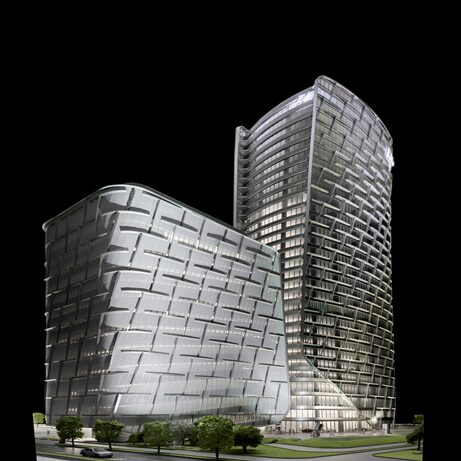

At QZY Models, which is known for producing precise architectural and industrial models, a similar principle applies. Just as miniature models in NLP simplify complex tasks while maintaining quality, QZY Models focuses on delivering high-quality, detailed physical models efficiently for clients worldwide. Efficiency and quality are key in both digital language processing and model-making. Scaled-down solutions give professionals, developers, and institutions access to advanced tools without overwhelming costs or infrastructure. Miniature models therefore represent a practical approach in technology that mirrors QZY Models’ philosophy in the physical modeling world.

How Do Miniature NLP Models Work?

These models use neural network architectures, especially transformer-based designs, but with fewer layers and parameters. They are pretrained on broad data using techniques like self-supervised learning and then fine-tuned on specific domains. This training lets them understand and generate text and handle tasks such as sentiment analysis and classification efficiently.
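As a rough illustration of how "fewer layers and parameters" plays out, the sketch below estimates the parameter count of a standard transformer from its depth and width. It uses the common approximation of about 12 × d_model² weights per layer (attention projections plus a feed-forward block four times as wide) and ignores biases, layer norms, and positional embeddings. The function name and the two example configurations are assumptions chosen for illustration.

```python
def approx_transformer_params(num_layers: int, d_model: int,
                              vocab_size: int, ffn_mult: int = 4) -> int:
    """Rough parameter estimate for a standard transformer stack."""
    attention = 4 * d_model * d_model               # Q, K, V and output projections
    feed_forward = 2 * ffn_mult * d_model * d_model  # up- and down-projection
    embeddings = vocab_size * d_model                # token embedding table
    return num_layers * (attention + feed_forward) + embeddings


# Hypothetical configurations, not taken from any published model.
small = approx_transformer_params(num_layers=6, d_model=512, vocab_size=32_000)
larger = approx_transformer_params(num_layers=24, d_model=2048, vocab_size=32_000)

print(f"small : {small / 1e6:.1f}M parameters")
print(f"larger: {larger / 1e9:.2f}B parameters")
```

Because the per-layer cost grows with the square of the model width, modest reductions in width and depth shrink the parameter count sharply.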

Which Training Methods Improve Miniature Model Performance?

Effective methods include transfer learning, in which a model learns general language patterns before specializing, and self-supervised learning, in which it predicts missing parts of text to build foundational language skills. Techniques such as layerwise training, progressive scaling, and selective parameter sharing can further improve small-model training with little loss of accuracy.
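The "predicting missing parts of text" idea can be sketched in a few lines. The example below is a simplified version of masked-token data preparation: it hides a random subset of tokens and keeps the originals as training targets. The function name, the `[MASK]` placeholder, and the 15% masking rate are assumptions for illustration. Real pipelines work on subword IDs rather than whole words and often use more elaborate masking rules.

```python
import random


def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=0):
    """Return (inputs, labels): masked positions carry the original token as label."""
    rng = random.Random(seed)  # seeded so the example is reproducible
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(mask_token)  # the model sees the placeholder
            labels.append(tok)         # and must predict the hidden token
        else:
            inputs.append(tok)
            labels.append(None)        # unmasked positions are not scored
    return inputs, labels


sentence = "small models can run on phones without a network connection".split()
inputs, labels = mask_tokens(sentence, mask_prob=0.3)
print(inputs)
print(labels)
```

No human annotation is needed here: the text itself supplies both the input and the answer, which is what makes the approach self-supervised.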

Why Are Miniature Models Important for NLP Applications?

Miniature models enable NLP capabilities on devices with limited memory and compute power, such as smartphones and embedded systems. They reduce costs and latency, allow offline operation without continuous internet access, and open AI tools to broader contexts where large models are impractical.

Who Benefits Most from Using Miniature NLP Models?

Organizations and developers working in resource-restricted environments, startups seeking affordable AI solutions, and fields that need fast on-device NLP, such as healthcare, IoT, and personal assistants, benefit most. These models broaden accessibility and support privacy by limiting how much data is sent to cloud servers.

When Should Miniature Models Be Chosen Over Larger Models?

Choose miniature models when hardware limitations, latency, or cost constraints are critical. For real-time applications on mobile or edge devices, or when offline processing is required, miniature models provide practical alternatives that balance speed and accuracy.

They are well suited to devices with limited computing power, such as smartphones, tablets, and other edge hardware, and to workloads that must run offline without a cloud connection. This is especially valuable in real-time scenarios such as instant translation, chatbots, and personalized recommendations, where applications need to respond quickly while still delivering useful results.

At QZY Models, a similar approach is applied in the physical world. Just as miniature NLP models provide practical solutions under constraints, QZY Models delivers high-quality architectural and industrial models efficiently for global clients. By focusing on precision while optimizing resources, QZY Models ensures clients—from designers to developers—receive detailed and professional results without unnecessary overhead. Choosing the right scale, whether in AI or model-making, allows professionals to work effectively while keeping costs and technical requirements manageable.

Where Are Miniature Language Models Commonly Deployed?

Common deployment targets include smartphones, tablets, IoT devices, embedded systems, and industrial automation equipment. These models also power chatbots, virtual assistants, text summarization tools, and automated customer support systems that benefit from low-latency NLP.

Does Using Miniature Models Sacrifice Accuracy?

While miniature models are smaller and potentially less powerful than large language models (LLMs), advanced training techniques and optimized architectures narrow the performance gap. Carefully designed miniature models can achieve strong accuracy for many practical NLP tasks.

Has Technology Advanced Miniature Models in Recent Years?

Yes. Innovations such as efficient transformer architectures, scalable training strategies (for example, those used for MiniCPM), and knowledge distillation have greatly improved miniature model effectiveness. These advances allow models with fewer parameters to approach, and on some benchmarks rival, larger models while using less energy and compute.
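Knowledge distillation, mentioned above, trains a small "student" model to imitate the output distribution of a larger "teacher". The following is a minimal sketch of the soft-target part of that loss in plain Python, assuming the widely used formulation: a KL divergence between temperature-softened distributions, scaled by the square of the temperature. The logits are made-up numbers. In practice this term is usually combined with an ordinary loss on the true labels and computed with a deep learning framework.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        pt * (math.log(pt) - math.log(ps))
        for pt, ps in zip(p_teacher, p_student)
        if pt > 0
    )
    return kl * temperature ** 2


# Made-up logits over three classes, for illustration only.
teacher = [4.0, 1.0, 0.5]
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 4.0, 1.0]

print(distillation_loss(close_student, teacher))
print(distillation_loss(far_student, teacher))
```

A student whose predictions track the teacher closely gets a smaller loss than one that disagrees, which is the signal that pulls the small model toward the larger model's behavior.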

Are Miniature Models Environmentally Friendly?

Miniature models typically consume less energy during training and inference, contributing to lower carbon footprints than massive LLMs. However, cumulative training of many small models still requires attention to environmental sustainability, prompting ongoing research into greener AI.

Can Miniature Models Handle Complex NLP Tasks?

While effective for many common NLP tasks, miniature models may struggle with tasks requiring extensive world knowledge or deep reasoning, areas where larger models excel due to their scale and training data. Hybrid approaches, for example handling routine requests with a small model and passing harder ones to a larger model, are often used to balance capability and efficiency.
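One way a hybrid setup might look is a simple confidence-based router: try the small model first and fall back to a larger one only when the small model is unsure. The sketch below is an illustration under stated assumptions rather than a description of any particular system. `route`, `tiny_sentiment`, `big_sentiment`, and the 0.8 threshold are all hypothetical, and the two "models" are stand-in functions.

```python
from typing import Callable, Tuple


def route(text: str,
          small_model: Callable[[str], Tuple[str, float]],
          large_model: Callable[[str], str],
          threshold: float = 0.8) -> Tuple[str, str]:
    """Return (answer, which_model). Falls back when confidence is below threshold."""
    answer, confidence = small_model(text)
    if confidence >= threshold:
        return answer, "small"
    return large_model(text), "large"


def tiny_sentiment(text: str) -> Tuple[str, float]:
    # Hypothetical stand-in for an on-device model: keyword lookup
    # with a made-up confidence score.
    lowered = text.lower()
    if "great" in lowered:
        return "positive", 0.95
    if "terrible" in lowered:
        return "negative", 0.95
    return "neutral", 0.40


def big_sentiment(text: str) -> str:
    # Hypothetical stand-in for a call to a larger hosted model.
    return "mixed"


print(route("This is great", tiny_sentiment, big_sentiment))
print(route("It was fine, I guess", tiny_sentiment, big_sentiment))
```

The design trade-off sits in the threshold: a higher value sends more traffic to the large model and costs more, while a lower value keeps more requests on the device at some risk to answer quality.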

What Are Some Advanced Uses of Miniature NLP Models?

Advanced uses include integration into robotics for command understanding, real-time translation on low-power devices, adaptive voice assistants, personalized content generation, and industrial automation communications. Miniature models enable NLP where low latency and compact size are prerequisites.

How Does QZY Models Leverage Miniature NLP Models?

QZY Models incorporates miniature NLP models to enhance communication and automation in its physical model production workflows. By using efficient NLP tools, the company streamlines client interactions, project documentation, and design feedback systems.

QZY Models Expert Views

“At QZY Models, embracing miniature NLP models aligns with our commitment to innovation and efficiency. These models empower us to deliver precise, responsive communication and smarter project automation without heavy computational demands. We see miniature models as vital tools for the future of AI-driven modeling solutions, making advanced technology accessible and sustainable.”

— Richie Ren, Founder of QZY Models

Conclusion

Miniature models in natural language processing offer a practical balance between performance and efficiency, enabling AI language capabilities on resource-limited devices and environments. Advances in training and model design continue to narrow the accuracy gap with large models, expanding their applicability. By adopting miniature NLP models, companies like QZY Models are building smarter, more sustainable solutions for a diverse range of industries.

Frequently Asked Questions (FAQs)

Q1: Are miniature NLP models suitable for real-time applications?

Yes, their compact size and efficiency make them ideal for low-latency, real-time processing on devices like smartphones.

Q2: How do miniature models compare with large language models?

They are smaller, faster, and less resource-intensive but may have slightly reduced accuracy or capability on highly complex tasks.

Q3: Can miniature models run offline?

Yes, their low resource needs allow deployment on edge devices without continuous internet access.

Q4: What industries use miniature NLP models?

Sectors include healthcare, IoT, customer service, robotics, and mobile application development.

Q5: How does training small models differ from large models?

Small models often use transfer learning, self-supervised learning, and efficient training techniques to maximize performance with fewer parameters.

What are miniature models in Natural Language Processing (NLP)?

Miniature models, also known as small language models (SLMs), are compact versions of large language models. They are trained for specific tasks with fewer parameters, making them resource-efficient, faster to deploy, and ideal for mobile devices or applications requiring high specialization.

What are the main benefits of small language models?

Small language models (SLMs) offer advantages such as lower computational requirements, faster training, and a smaller memory footprint. They are often specialized for tasks such as chatbots, sentiment analysis, and text summarization, and when fine-tuned they can be competitive with larger models on narrow applications.

How are small language models different from large language models (LLMs)?

Small language models (SLMs) are designed with fewer parameters and a smaller memory footprint than large language models (LLMs). This makes them more efficient for specific tasks, such as machine translation or sentiment analysis, while requiring less computational power and memory.

What are common applications of small language models?

Small language models are commonly used in applications such as chatbots, text summarization, sentiment analysis, code generation, and machine translation. Their compact size allows them to be deployed in resource-constrained environments like mobile devices and edge computing.

What is the role of large language models in mental health status classification?

Large language models (LLMs) can enhance mental health status classification by processing and analyzing text data for better diagnostic accuracy. These models improve the detection of mental health disorders by recognizing patterns in speech or writing, which can be crucial for scalable and reliable mental health assessments.

How do traditional natural language processing (NLP) methods compare to large language models for mental health classification?

Traditional NLP methods rely on rule-based or statistical models, while large language models (LLMs) leverage deep learning to analyze vast amounts of data. LLMs typically outperform traditional methods in accuracy and scalability, particularly in complex tasks like mental health classification where context and nuance are critical.

How can large language models be applied in biological research?

Large language models (LLMs) can be used to analyze biological data by converting complex biological information into a text format that the models can process. This opens up applications like single-cell analysis, where LLMs help to identify patterns and relationships in large-scale biological datasets, enhancing research in genomics and biomedicine.

What is SciLinker and how does it use text mining for biomedical research?

SciLinker is a text mining framework that maps associations between biomedical entities using co-occurrence analysis. It extracts and quantifies connections between biological entities, enabling researchers to uncover hidden relationships in vast amounts of biomedical literature, thus advancing understanding in fields like genomics and disease pathways.