Llama is a family of large language models built on a decoder-only transformer architecture that combines rotary positional embeddings (RoPE), SwiGLU activations, and RMSNorm. The architecture trains efficiently and generates text autoregressively over long contexts, which suits applications such as chatbots and content creation. Its modular, scalable design makes it adaptable to a wide range of real-world AI use cases.

What is the core design of the Llama model architecture?

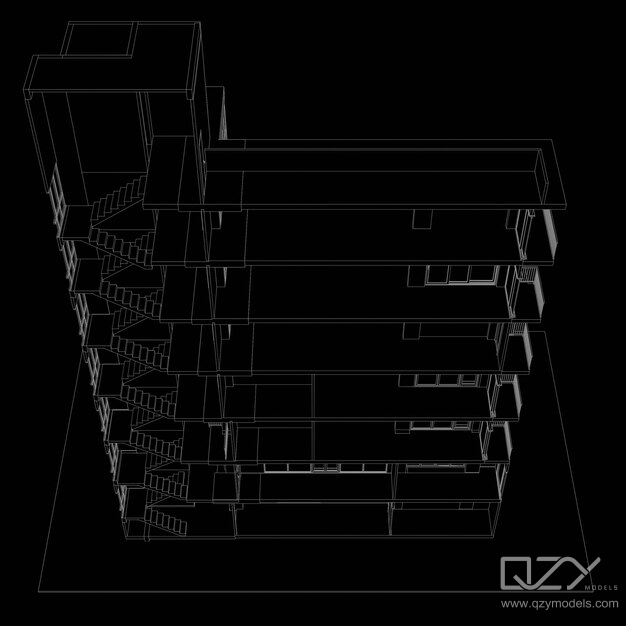

The Llama architecture uses a decoder-only transformer design that stacks identical blocks, each processing tokens through self-attention and a gated feed-forward network. Instead of traditional absolute positional encodings, it uses rotary positional embeddings (RoPE), which make longer contexts easier to handle. Causal masking lets every position of a training sequence be processed in parallel while each token still attends only to earlier tokens, so the model trains efficiently and generates text autoregressively, one token at a time, for tasks such as text generation and summarization.
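
A minimal sketch of causal self-attention, the core of this design, is shown below in plain Python. It assumes a single attention head, no learned query/key/value projections, and toy two-dimensional vectors, so it illustrates the masking rule rather than Llama’s actual implementation, which computes all positions at once with a mask matrix on accelerators. The function names are illustrative.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    # Subtract the maximum before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(q: List[Vector], k: List[Vector], v: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product attention with a causal mask.

    q, k and v hold one vector per token position. Position i attends only
    to positions 0..i, so no token can see tokens that come after it.
    """
    d = len(q[0])
    outputs = []
    for i, qi in enumerate(q):
        # The causal mask: only keys at positions j <= i are scored.
        scores = [sum(a * b for a, b in zip(qi, k[j])) / math.sqrt(d) for j in range(i + 1)]
        weights = softmax(scores)
        # Weighted sum of the visible value vectors.
        outputs.append([sum(w * v[j][c] for j, w in enumerate(weights)) for c in range(len(v[0]))])
    return outputs


q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
out = causal_self_attention(q, k, v)
print(out[0])  # [1.0, 2.0]: the first token can only attend to itself
```

Because each output depends only on the current and earlier positions, changing a later token’s value vector leaves earlier outputs untouched. That property is what allows training on all positions in parallel while still generating one token at a time.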

Llama’s modular blocks combine attention, SwiGLU-activated feed-forward networks, and residual connections, which add each sublayer’s output back to its input and ease gradient flow through deep stacks. Normalization is applied before each sublayer (pre-normalization) rather than after it, which keeps training stable across many layers and supports models with billions of parameters.
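
The wiring of a pre-normalized block can be sketched as follows. The attention and feed-forward sublayers are passed in as plain functions (hypothetical stand-ins), so the sketch shows only how normalization and residual connections are arranged: each sublayer sees a normalized input, and its output is added back to the unnormalized stream. The final normalization after the last block follows the Llama reference design; all function names here are illustrative.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]
Seq = List[Vector]
Attention = Callable[[Seq], Seq]
FeedForward = Callable[[Vector], Vector]


def rms_norm(x: Vector, eps: float = 1e-6) -> Vector:
    # RMSNorm with the learned gain omitted (treated as 1) for brevity.
    rms = math.sqrt(sum(val * val for val in x) / len(x) + eps)
    return [val / rms for val in x]


def add(a: Vector, b: Vector) -> Vector:
    return [x + y for x, y in zip(a, b)]


def pre_norm_block(xs: Seq, attention: Attention, feed_forward: FeedForward) -> Seq:
    # Sublayer 1: normalize, attend across positions, add the residual.
    attn_out = attention([rms_norm(x) for x in xs])
    hs = [add(x, a) for x, a in zip(xs, attn_out)]
    # Sublayer 2: normalize, apply the per-token feed-forward network, add the residual.
    return [add(h, feed_forward(rms_norm(h))) for h in hs]


def decoder_stack(xs: Seq, layers: List[Tuple[Attention, FeedForward]]) -> Seq:
    # Identical blocks are applied one after another.
    for attention, feed_forward in layers:
        xs = pre_norm_block(xs, attention, feed_forward)
    # One final normalization follows the last block.
    return [rms_norm(x) for x in xs]


def zero_attention(seq: Seq) -> Seq:
    # Hypothetical stand-in sublayer that contributes nothing.
    return [[0.0] * len(x) for x in seq]


def zero_ffn(x: Vector) -> Vector:
    # Hypothetical stand-in feed-forward network that contributes nothing.
    return [0.0] * len(x)


xs = [[1.0, 2.0], [3.0, -4.0]]
print(pre_norm_block(xs, zero_attention, zero_ffn) == xs)  # True
```

With both sublayers returning zeros, the block returns its input unchanged, which shows how the residual path lets signals and gradients pass straight through deep stacks.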

How does Llama handle positional information differently?

Llama replaces the absolute positional embeddings used by many earlier transformers with rotary positional embeddings (RoPE), which encode position inside the attention mechanism itself: query and key vectors are rotated by angles proportional to each token’s position, so the attention score between two tokens depends on their relative distance. The scheme adds no learned parameters, and it helps the model make use of extended context, which is especially important for applications that must retain earlier parts of an interaction, such as conversational agents and assistants.

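Because RoPE computes positions from a fixed formula rather than looking them up in a learned table, it applies to sequences of any length and serves as the basis for several techniques that extend a model’s context window after training. Without such adaptation, however, quality is not guaranteed beyond the context length the model was trained on.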
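
The rotation can be sketched in a few lines of Python. This sketch assumes the adjacent-pair convention of the original RoPE formulation and a base of 10000; some implementations pair dimensions differently (for example, the first half of the vector against the second half), and the base differs between model versions. The check at the end illustrates RoPE’s key property: the score between a query and a key depends only on their relative offset, not on their absolute positions.

```python
import math
from typing import List

Vector = List[float]


def rope(x: Vector, pos: int, base: float = 10000.0) -> Vector:
    """Rotate adjacent pairs (x[2i], x[2i+1]) by the angle pos * base**(-2i/d)."""
    d = len(x)
    if d % 2:
        raise ValueError("RoPE needs an even dimension")
    out: Vector = []
    for i in range(0, d, 2):
        # i already steps by 2, so i / d equals 2 * (pair index) / d.
        theta = pos * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = x[i], x[i + 1]
        # Standard 2-D rotation of the pair; no learned parameters involved.
        out.extend([x0 * c - x1 * s, x0 * s + x1 * c])
    return out


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


q = [0.3, -1.2, 0.5, 0.8]
k = [1.0, 0.4, -0.7, 0.2]
# The same relative offset (3) at different absolute positions gives the same score.
print(math.isclose(dot(rope(q, 5), rope(k, 2)), dot(rope(q, 13), rope(k, 10)), abs_tol=1e-9))  # True
```

Because each pair is only rotated, vector lengths are preserved, and position 0 leaves the vector unchanged.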

Why are SwiGLU activations and gated feed-forward networks important in Llama?

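Llama uses SwiGLU activations in its feed-forward networks, replacing the ReLU or GELU activations found in many earlier transformers. SwiGLU multiplies one linear projection of the input, passed through the Swish (SiLU) function, element-wise by a second linear projection, so one branch acts as a gate that modulates the other. In the experiments that introduced it, this gated design gave lower perplexity than ReLU- or GELU-based feed-forward layers, and it is credited with helping the model capture more complex linguistic and contextual relationships.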

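Because the gate is computed from the input, the layer can emphasize or suppress individual hidden features token by token, during both training and inference. This added expressiveness is often credited with helping on tasks that require nuanced reasoning, such as instruction following or domain-specific understanding, although such gains are difficult to attribute to the activation function alone.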
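
The SwiGLU feed-forward layer computes W_down(SiLU(W_gate x) ⊙ W_up x), where ⊙ is element-wise multiplication. The plain-Python sketch below follows that formula; the weight names `w_gate`, `w_up`, and `w_down` are illustrative labels, biases are omitted, and the tiny identity matrices stand in for learned weights.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[Vector]  # list of rows


def silu(z: float) -> float:
    # Swish with beta = 1 (also called SiLU): z * sigmoid(z), written to avoid overflow.
    if z >= 0:
        return z / (1.0 + math.exp(-z))
    e = math.exp(z)
    return z * e / (1.0 + e)


def matvec(w: Matrix, x: Vector) -> Vector:
    # Matrix-vector product with w given as a list of rows.
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def swiglu_ffn(x: Vector, w_gate: Matrix, w_up: Matrix, w_down: Matrix) -> Vector:
    gate = [silu(g) for g in matvec(w_gate, x)]  # input-dependent gate
    up = matvec(w_up, x)                         # candidate features
    hidden = [g * u for g, u in zip(gate, up)]   # element-wise gating
    return matvec(w_down, hidden)                # project back to model width


identity = [[1.0, 0.0], [0.0, 1.0]]
print(swiglu_ffn([1.0, -2.0], identity, identity, identity))
```

In the Llama paper, the hidden width of this layer is set to about two-thirds of the conventional 4× model width, which keeps the parameter count close to that of a standard two-matrix feed-forward layer despite the third weight matrix.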

Which normalization strategies does Llama use for better model training and inference?

Llama replaces standard layer normalization with RMSNorm and applies it as pre-normalization, normalizing the input to each attention and feed-forward sublayer rather than its output. Pre-normalization keeps gradients well behaved across many stacked transformer blocks, which is essential for training large models. RMSNorm rescales activations by their root mean square without subtracting the mean or adding a bias, which makes it slightly cheaper to compute than layer normalization while keeping activations on a consistent scale during both training and long-sequence inference.
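
The difference between the two normalizations is easiest to see side by side. In this sketch the learned gain is passed in explicitly, and `eps = 1e-6` is an assumed default (released Llama configurations use small values of this order). RMSNorm skips the mean subtraction and bias that LayerNorm performs, which is where its small computational saving comes from.

```python
import math
from typing import List

Vector = List[float]


def rms_norm(x: Vector, gain: Vector, eps: float = 1e-6) -> Vector:
    # Scale by the root mean square only: no mean subtraction and no bias.
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [g * v / rms for g, v in zip(gain, x)]


def layer_norm(x: Vector, gain: Vector, bias: Vector, eps: float = 1e-6) -> Vector:
    # Standard LayerNorm: center on the mean, divide by the std, then shift.
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    std = math.sqrt(var + eps)
    return [g * (v - mean) / std + b for g, v, b in zip(gain, x, bias)]


x = [1.0, 2.0, 3.0, 4.0]
ones, zeros = [1.0] * 4, [0.0] * 4
print(rms_norm(x, ones))           # keeps the input's mean offset (all positive)
print(layer_norm(x, ones, zeros))  # re-centred around zero
```

For an input that already has zero mean, the two functions produce the same result; for other inputs, RMSNorm preserves the mean offset that LayerNorm removes.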

Together, these normalization choices make training more reliable and keep inference efficient. Llama models are also commonly deployed with compression methods such as quantization and pruning, although aggressive compression can reduce output quality.
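
Quantization stores weights at lower precision to save memory. The sketch below shows its simplest form, symmetric per-tensor int8 quantization with a single scale factor. Real Llama deployments typically use more elaborate schemes (per-channel or block-wise scales, 4-bit formats, calibration-based methods), so this is an illustration of the principle, not any particular toolkit’s method; the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: [-max|w|, max|w|] -> [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    if max_abs == 0.0:
        return [0] * len(weights), 1.0
    scale = max_abs / 127.0
    # Round to the nearest integer step and clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    # Recover approximate float weights from the stored integers.
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
print(q)  # [50, -127, 0, 100]
```

Each restored weight is off by at most half a quantization step, which is why very small weights (here 0.003) can round to zero; this rounding error is the source of the quality loss that aggressive compression can cause.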

How does Llama’s architectural design support production and real-world use cases?

Llama’s modular and scalable transformer stack is well suited to production pipelines. It supports instruction tuning, adapter-based fine-tuning, and quantization, allowing developers to optimize the model for specific applications. This makes Llama suitable for real-world use cases like enterprise assistants, legal research tools, and healthcare chatbots, where customization and efficiency are critical.
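
Low-Rank Adaptation (LoRA) is one widely used adapter-style method for fine-tuning Llama models. The sketch below shows its core idea under simplifying assumptions (plain Python lists, a single linear layer, hypothetical function names): the frozen weight matrix W is left untouched, and a trainable low-rank product B·A, scaled by alpha / rank, is added to its output. In LoRA, B is initialized to zero, so fine-tuning starts from the unmodified base model.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]  # list of rows


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, x)) for row in w]


def lora_forward(x: Vector, w_frozen: Matrix, a: Matrix, b: Matrix,
                 alpha: float, rank: int) -> Vector:
    # Frozen base projection W x plus the scaled low-rank update (alpha / rank) * B (A x).
    base = matvec(w_frozen, x)
    update = matvec(b, matvec(a, x))
    scale = alpha / rank
    return [y + scale * u for y, u in zip(base, update)]


def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    # Only A (rank x d_in) and B (d_out x rank) are trained.
    return rank * d_in + d_out * rank


w = [[1.0, 2.0], [3.0, 4.0]]
a = [[0.5, 0.5]]   # rank 1
b = [[1.0], [2.0]]
print(lora_forward([1.0, 1.0], w, a, b, alpha=2.0, rank=1))  # [5.0, 11.0]
print(lora_param_count(4096, 4096, 8), 4096 * 4096)          # 65536 16777216
```

The parameter count shows why the approach is cheap: for an illustrative 4096 × 4096 projection, a rank-8 adapter trains 65,536 values instead of 16,777,216.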

Because Llama is released in several sizes, its smaller variants, particularly when quantized, can run on commodity hardware such as a single consumer GPU, while larger variants serve more demanding workloads. This range lets teams trade output quality against deployment cost for each application.
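
Whether a model fits on commodity hardware is largely a matter of arithmetic. The helper below estimates the memory for the weights alone; it deliberately ignores activations, the attention key/value cache, and metadata such as quantization scales, so real requirements are somewhat higher. The 7-billion-parameter figure is used for illustration, and the function name is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate storage for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for bits in (16, 8, 4):
    print(f"7B parameters at {bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

This prints 14.0, 7.0, and 3.5 GB, which is why 4-bit quantized versions of the smaller models can fit within the memory of a consumer GPU.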

What sets Llama apart from other large language models like GPT?

Llama’s core architectural choices (rotary positional embeddings, SwiGLU activations, and RMSNorm pre-normalization) were adopted from earlier research rather than invented for Llama, but together they distinguish it from the original GPT models. They improve the handling of relative position, help training stability, and keep inference efficient. In addition, Llama’s support for adapter-based fine-tuning, which trains only a small fraction of the parameters, makes domain-specific adaptation far cheaper than retraining the full model.

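By contrast, GPT-2 and GPT-3 use learned absolute positional embeddings and GELU activations; the architectures of later GPT models have not been fully disclosed. Llama’s design, together with the availability of its weights for fine-tuning, has made it a common base for instruction-tuned models and for applications that must manage long, changing conversational context.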
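
The contrast in positional encoding can be quantified with a small sketch. A learned absolute table needs one trainable vector per position, while RoPE derives its angles from a formula. The GPT-3 figures used here (a 2,048-token context and a model width of 12,288 for the largest configuration) come from the GPT-3 paper; the RoPE head dimension of 128 and base of 10000 are illustrative assumptions, and the function names are hypothetical.

```python
from typing import List


def learned_position_params(max_positions: int, d_model: int) -> int:
    # A learned absolute table stores one trainable d_model vector per position.
    return max_positions * d_model


def rope_angles(pos: int, head_dim: int, base: float = 10000.0) -> List[float]:
    # RoPE derives its angles from a fixed formula, so it has no trainable
    # parameters and can be evaluated for any position.
    return [pos * base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


# Largest GPT-3 configuration: 2048 positions, model width 12288.
print(learned_position_params(2048, 12288))  # 25165824
print(len(rope_angles(5000, 128)))           # 64 angles, even past position 2048
```

Being able to compute angles for position 5000 does not by itself mean the model produces good output there; quality beyond the trained context length usually requires further adaptation.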

Can Llama be integrated with multi-modal AI systems?

Yes. The original Llama models are text-only, but Llama can serve as the language backbone of a multi-modal system. A common pattern connects a modality-specific encoder, for example an image or audio encoder, to Llama through a learned projection that maps the encoder’s outputs into the model’s token-embedding space. The decoder then processes these features alongside text, enabling cross-modal reasoning such as answering questions about an image, which makes Llama a valuable component of multi-sensory AI applications.

This design fits well with the increasing trend in AI toward integrated systems capable of understanding and responding to multiple types of input simultaneously.
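
The projection pattern can be sketched as follows. This is a simplified, hypothetical version of the approach used by LLaVA-style systems: a single linear projection maps vision-encoder features into the language model’s embedding width, and the resulting "image tokens" are placed in the same sequence as the text embeddings (here, before them). Other designs use a small MLP or cross-attention layers instead, and all dimensions here are toy values.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]  # list of rows


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, x)) for row in w]


def build_multimodal_sequence(patch_features: List[Vector], w_proj: Matrix,
                              text_embeddings: List[Vector]) -> List[Vector]:
    # Map each image-encoder feature into the language model's embedding width.
    image_tokens = [matvec(w_proj, p) for p in patch_features]
    # Image "tokens" first, then text tokens: the decoder sees one sequence.
    return image_tokens + text_embeddings


patches = [[0.1, 0.2, 0.3, 0.4]] * 3  # 3 patches from a hypothetical 4-dim vision encoder
w_proj = [[1.0, 0.0, 0.0, 0.0],
          [0.0, 1.0, 0.0, 0.0]]       # projects 4 dims -> model width 2
text = [[0.5, -0.5], [1.0, 0.0]]      # 2 already-embedded text tokens
seq = build_multimodal_sequence(patches, w_proj, text)
print(len(seq), len(seq[0]))  # 5 2
```

The decoder then treats the five vectors as an ordinary input sequence, so the attention layers can relate image content to the text that follows it.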

Where is Llama architecture most commonly applied today?

Llama is widely used in applications like chatbots, summarization tools, and conversational AI agents. Its instruction-following ability and support for multi-turn context make it a popular choice in enterprise environments, including the healthcare and legal sectors. Its range of model sizes lets it serve both large-scale batch workloads and latency-sensitive, real-time applications, while fine-tuning allows it to meet the specific needs of various industries.

Its smaller variants can also be deployed on commodity hardware, lowering implementation costs for businesses.

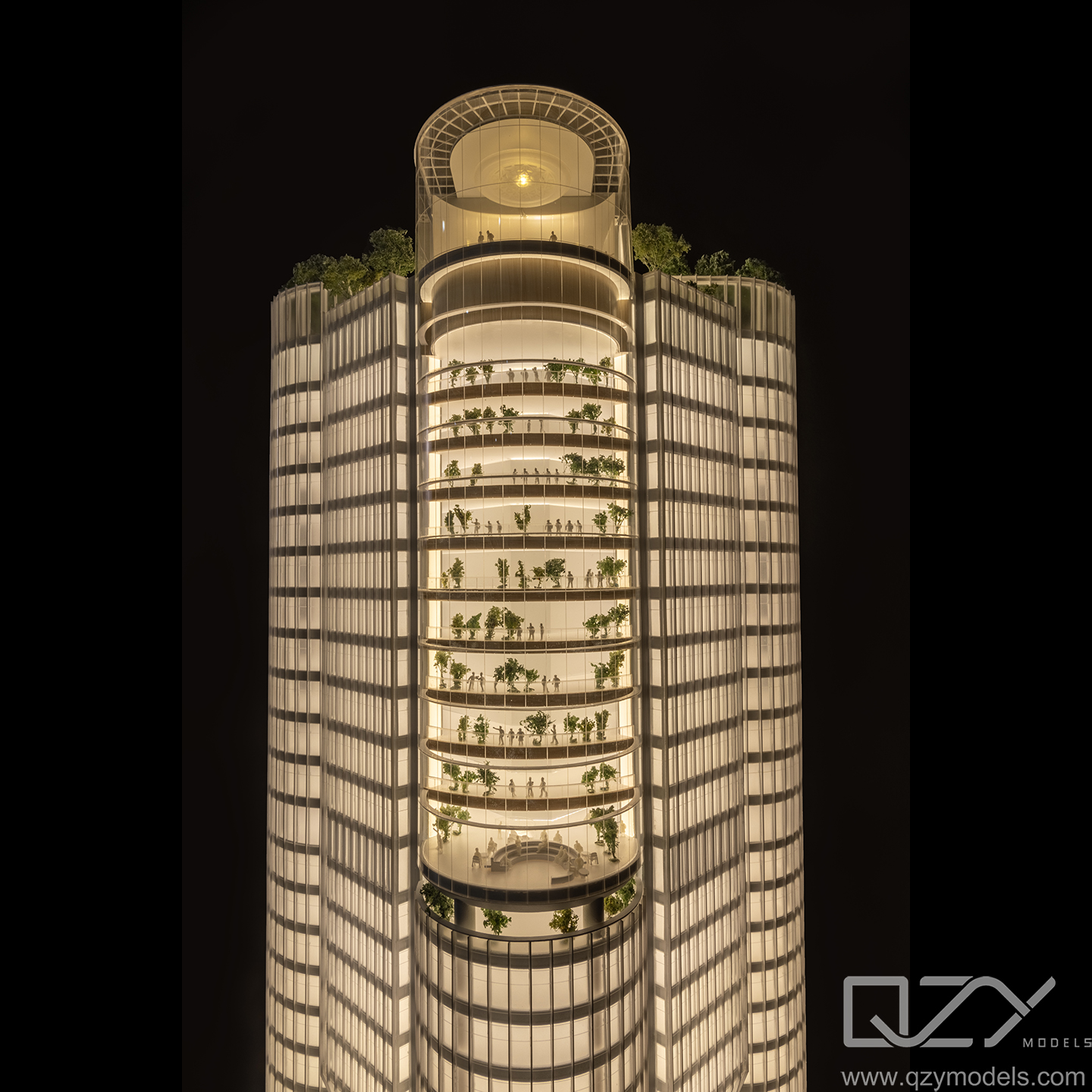

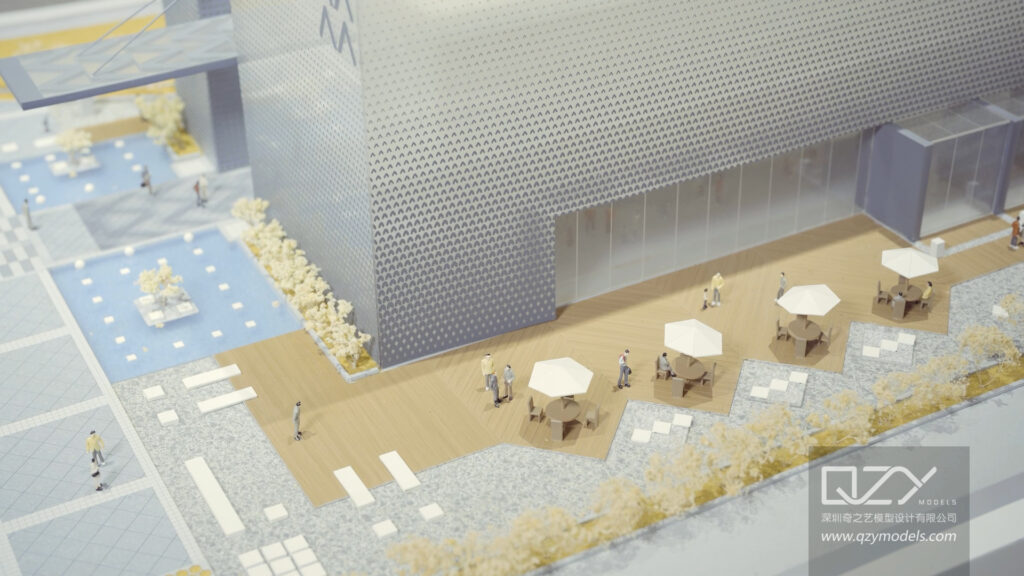

QZY Models Expert Views

“At QZY Models, we recognize the potential of Llama’s innovative architecture for transforming the way we engage with AI in architectural and industrial modeling. Its modularity and fine-tuning capabilities offer remarkable flexibility, enabling us to create intelligent assistants that enhance client interactions and support intricate design workflows. By leveraging Llama’s efficient long-context handling and robust AI features, we continue to improve our project management and customer satisfaction.” — Richie Ren, Founder of QZY Models

How does understanding Llama’s architecture benefit architectural and industrial model specialists?

For architectural and industrial model specialists, understanding Llama’s architecture opens doors to harnessing AI’s potential in design automation, client interaction, and enhanced visualization. Llama’s modular setup and customization capabilities align well with the needs of industries like architecture and urban planning, allowing experts to streamline workflows and provide personalized support.

Incorporating AI into the modeling process, especially a model like Llama that can draw on long project context, can improve both the quality and the speed of decision-making, leading to better outcomes on complex projects.

What role does Llama architecture play in NLP advancements?

Llama has advanced natural language processing (NLP) less by inventing new techniques than by combining proven ones, namely rotary positional encoding, gated activation functions, and RMSNorm pre-normalization, in a family of efficiently trained models whose weights were made available to researchers. These choices make training more stable, improve the model’s ability to handle long-range dependencies, and support better context understanding. As a result, Llama supports more human-like language generation, improving user interactions in AI systems that rely on natural language, such as chatbots and virtual assistants.

The long-context techniques it helped popularize, RoPE in particular, have since been adopted by many later open models.

| Key Feature | Description | Benefit |
|---|---|---|
| Decoder-only Transformer | Stack of causal self-attention and feed-forward blocks | Efficient autoregressive generation |
| Rotary Positional Embeddings (RoPE) | Rotates queries and keys by position-dependent angles | Relative-position awareness without learned position parameters |
| SwiGLU Activation | Gated nonlinear activation in the feed-forward network | Richer representations and stable learning |
| RMSNorm Normalization | Root-mean-square scaling applied before each sublayer | Stable training at lower cost than LayerNorm |
| Modular Architecture | Supports adapter tuning and quantization | Flexible production deployment |

| Application Area | Use Case | Advantage |
|---|---|---|
| Conversational AI | Chatbots and assistants | Long context handling and dynamic responses |
| Enterprise Systems | Specialized knowledge bases | Custom fine-tuning for domain adaptation |
| Multi-modal AI | Fuse with image/audio backbones | Cross-modal reasoning efficiency |
| Industrial Modeling Support | Intelligent design assistants | Enhanced creativity and workflow |

FAQs

What is rotary positional embedding in Llama?

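It is a method of encoding token positions inside the attention mechanism: query and key vectors are rotated by angles proportional to their positions, so attention scores depend on the relative distance between tokens. It adds no learned parameters and is well suited to long sequences.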

How does SwiGLU improve Llama’s performance?

It adds an input-dependent gate to each feed-forward layer, which helps capture complex contextual interactions and, in published comparisons, improved model quality over ReLU and GELU activations.

Can Llama models be customized for specific industries?

Yes, Llama can be adapted through fine-tuning and instruction tuning to meet the specific needs of various industries.

Is Llama suitable for real-time applications?

Yes, for many use cases. Smaller Llama variants, especially when quantized, can respond quickly enough for real-time interactive AI systems, although actual latency depends on model size, hardware, and serving software.

How does QZY Models use AI architectures like Llama?

QZY Models utilizes AI architectures like Llama to automate complex design processes and enhance client interactions with intelligent assistants.