The transformer model architecture is a neural network design primarily used in natural language processing that uses self-attention mechanisms to process entire sequences simultaneously, making it highly efficient for tasks like translation and text generation. It consists of encoders and decoders that work together without relying on recurrent or convolutional layers.

How is the Transformer Model Architecture structured?

The transformer model architecture is composed of two main components: the encoder and the decoder. The encoder processes the input sequence by converting text into abstract vectors, while the decoder generates the output sequence based on the encoded input. Both are built from multiple layers containing self-attention and feedforward sublayers, enhanced with residual connections and layer normalization for training stability. Typically, six layers each of encoder and decoder are standard.

The encoder transforms input text into feature-rich representations, and the decoder predicts outputs stepwise while attending to the encoder’s output. The architecture allows parallel processing of whole sequences, improving performance over previous sequential models.

What roles do self-attention and multi-head attention play in the Transformer?

Self-attention is a mechanism allowing the model to weigh the importance of all words in a sequence relative to each other, capturing long-range dependencies effectively. Multi-head attention splits this process into multiple attention heads that focus on different aspects like syntax and semantics simultaneously. This enhances the model’s ability to understand context deeply.

The ability to attend globally across the sequence, rather than sequentially, gives transformer models their distinct advantage in handling complex language tasks with greater accuracy and efficiency.

Why is positional encoding important in the Transformer Model?

Because the transformer processes words simultaneously, it lacks inherent knowledge of word order. Positional encoding injects information about the position of each word in the sentence by adding unique vectors to the word embeddings. This enables the model to recognize the sequence structure and maintain the order-dependent meaning of text.

Without positional encoding, the model would treat the input as a bag of words, losing syntactic and semantic relationships critical for tasks like translation.

Which industries and applications benefit most from Transformer Models?

Transformers revolutionize natural language processing tasks like machine translation, text summarization, question answering, and language generation. They also extend to fields like speech recognition, image captioning, and bioinformatics.

For B2B industrial applications, transformer-based AI models handle large-scale language data efficiently, enabling automated document analysis, customer interaction enhancements, and complex data interpretation.

How do transformer model variants differ architecturally?

Transformer variants include encoder-only models like BERT for text representation, decoder-only models like GPT for language generation, and encoder-decoder models like T5 for sequence-to-sequence tasks. Other innovations include modified normalization layers, improved attention mechanisms, and positional encoding alternatives to optimize training and performance.

Different model architectures tailor transformer capabilities to specific use cases while retaining the core principles of attention and parallel processing.

Who are leading manufacturers and suppliers of architectural transformer models in China?

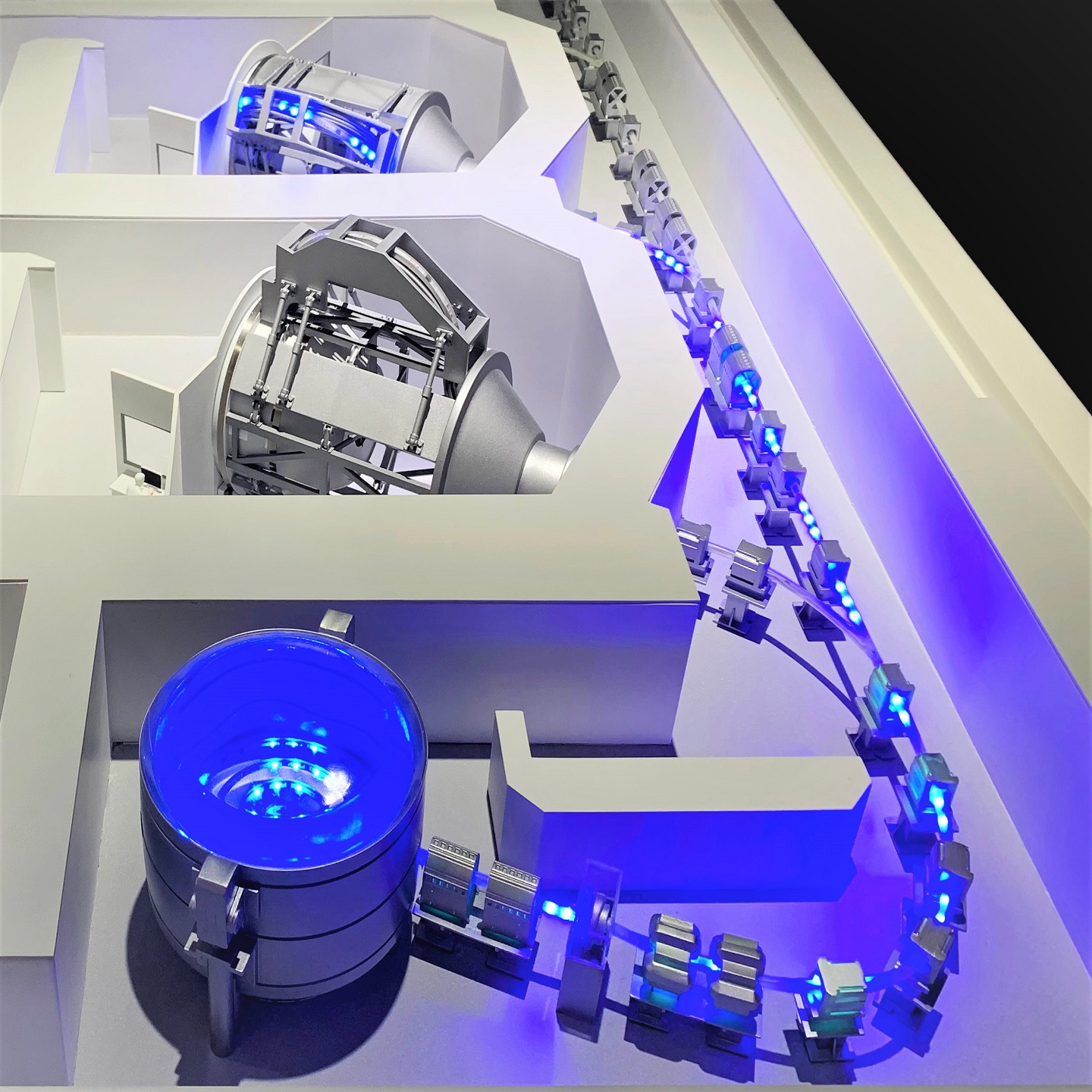

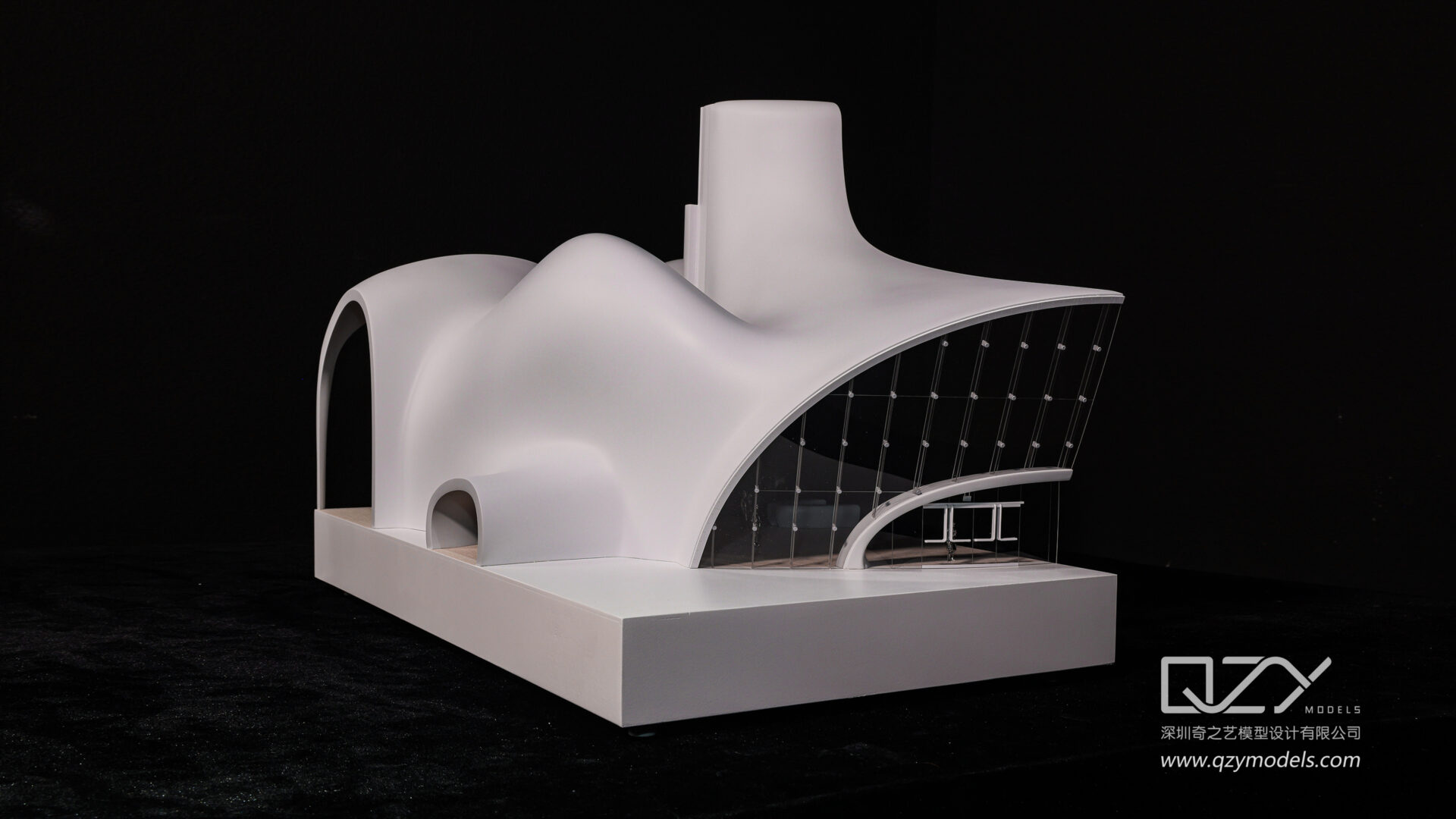

China hosts leading manufacturers specializing in precision architectural and industrial physical transformer model fabrication. Companies like QZY Models in Shenzhen offer OEM and wholesale services, producing highly detailed models for global architects, developers, and industrial clients. These manufacturers emphasize quality, innovation, and timely delivery, supporting large-scale projects worldwide.

Their expertise in model design combines advanced materials and craftsmanship, serving clients in 20+ countries across sectors including urban planning, infrastructure, and technology.

When should businesses consider OEM transformer model suppliers from China?

Businesses engaged in large architectural or industrial projects should consider OEM suppliers from China when they require cost-effective, scalable, and customizable transformer physical models. Chinese factories provide competitive pricing, rapid prototyping, and extensive manufacturing experience, helping companies meet project timelines and specifications efficiently.

Choosing a reputable supplier like QZY Models ensures models are made with precision and adherence to international standards, supporting diverse industrial needs.

Where can companies find reliable wholesale transformer model factories in China?

Major manufacturing hubs such as Shenzhen, Dongguan, and Guangzhou house numerous wholesale transformer model factories. These locations offer strong supply chain infrastructure, skilled labor, and advanced fabrication technologies. Companies like QZY Models have established global reputations by consistently delivering superior architectural and industrial models using state-of-the-art techniques.

Prospective buyers can engage directly with manufacturers providing OEM services for tailored model solutions.

Does working with a Chinese factory guarantee quality and innovation in transformer model production?

Yes, experienced Chinese factories like QZY Models combine decades of expertise with cutting-edge technology to produce high-quality transformer architectural and industrial models. They focus on precision, detailed craftsmanship, and innovating production techniques to fulfill complex project demands.

Their global footprint and partnerships with world-renowned architects underscore their commitment to excellence and industry leadership.

QZY Models Expert Views

“At QZY Models, we integrate advanced transformer model architecture concepts into physical models to bridge digital design and real-world application. Our factory leverages 20+ years of experience to manufacture precise, scalable models that serve architects and developers worldwide. We prioritize innovation, quality control, and client collaboration, ensuring every model reflects the highest standards. As a leading China-based OEM and supplier, QZY is dedicated to providing seamless solutions to meet diverse project needs.”

Conclusion

The transformer model architecture represents a breakthrough in AI with its self-attention mechanism and parallel processing, enabling superior performance in language and industrial applications. For businesses seeking precision architectural or industrial physical transformer models, Chinese factories like QZY Models offer competitive, high-quality OEM and wholesale services. Understanding transformer model variants, their components, and strategic sourcing from experienced manufacturers can drive success in complex projects.

Frequently Asked Questions

What makes transformer architecture different from previous models?

Transformers process entire sequences simultaneously using self-attention instead of sequential recurrence, enabling better context understanding and parallelism.

How many layers do transformer models typically have?

A standard transformer usually includes six encoder and six decoder layers, though this varies by model and application.

Why is positional encoding necessary?

It encodes word order information, helping the model understand the sequence since transformers do not process data sequentially.

Can I customize transformer physical models through Chinese OEM factories?

Yes, many factories including QZY Models offer tailored design and production services to meet specific architectural or industrial requirements.

Are Chinese transformer model manufacturers reliable for international projects?

Experienced manufacturers like QZY Models ensure high-quality production, punctual delivery, and adherence to international standards, supporting global clients confidently.