What Is Diffusion Model Architecture and How Does It Work?

In less than five years, diffusion models have become the backbone of AI image and media generation, yet over 60% of architecture and design firms still rely on manual visualization workflows that are slow, expensive, and hard to scale. Global generative AI spend in design-related industries is projected to exceed 20 billion USD by 2030, pushing studios to adopt more automated, model-driven pipelines or risk falling behind competitors who can iterate concepts and client presentations much faster. In this context, combining modern diffusion model architectures with physical model specialists like QZY Models enables a measurable boost in design quality, communication, and time-to-market.

How Is The Current Industry Using Diffusion Models And What Pain Points Remain?

Architecture, real estate, and industrial design are rapidly adopting generative AI to accelerate visualization, but adoption is uneven and often experimental. Many firms test diffusion-based tools (like image generators and layout assistants) in isolated pilots rather than deeply integrating them into design or marketing workflows. This fragmented use leads to underutilization of the technology and missed opportunities for ROI.

A key challenge is data and quality control. Diffusion models need large volumes of clean, representative visual data (plans, renderings, photos, material references), yet many organizations have scattered archives and inconsistent standards. As a result, outputs can be visually impressive but misaligned with brand guidelines, local regulations, or engineering constraints, creating friction between creative, technical, and commercial teams.

Another pain point is the gap between digital concepts and physical communication. Clients, investors, and government stakeholders still make high-stakes decisions based on physical models, mock-ups, and exhibition pieces. While diffusion models can rapidly propose variations and atmospheres, most firms lack a structured workflow to translate these AI-generated ideas into high-fidelity physical models. This is precisely where a specialist partner like QZY Models adds value by turning diffusion-driven design directions into accurate architectural or industrial models that decision-makers can touch, inspect, and trust.

Finally, there is a skills and process gap. Many design teams understand rendering or BIM, but fewer understand how diffusion models work under the hood: the architecture, timesteps, conditioning, and constraints. This makes it hard to onboard AI into professional pipelines with proper QA, documentation, and measurable KPIs (e.g., hours saved per project, win-rate uplift in competitions, or pre-sales conversion improvement for real estate developers).

What Are Diffusion Models And How Does The Architecture Work?

Diffusion models are generative models that learn to synthesize new data (for example, images or 3D projections) by reversing a gradual noising process. The core idea is twofold: a forward process where structured data is incrementally corrupted with noise, and a reverse process where a neural network learns to remove that noise step by step until a clean sample emerges that resembles the training distribution.

Mathematically, the forward process starts with a real sample $x_0$ (e.g., an architectural façade image) and repeatedly adds small amounts of Gaussian noise over $T$ steps, producing $x_1, x_2, \dots, x_T$. For large $T$, the signal is almost pure noise. The reverse process learns a parameterized model $p_\theta(x_{t-1} \mid x_t, t, c)$, where $c$ can include conditioning information such as text prompts, class labels, or structural guides like floor plans. By sampling backward from $x_T$ (random noise) to $x_0$-like outputs, the model generates new but distribution-consistent data.
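The forward noising process can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: it assumes a linear noise schedule with commonly used default values (`beta_start`, `beta_end`), which the article does not specify, and it uses the standard closed form that jumps from the clean sample to any step in one draw, namely the clean sample scaled by `sqrt(alpha_bar_t)` plus Gaussian noise scaled by `sqrt(1 - alpha_bar_t)`. The function names and the four-value "image" are hypothetical stand-ins.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances beta_1..beta_T (linear schedule is an assumption)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_sample(x0, t, alpha_bars, rng):
    """Draw the noisy sample at step t directly from the clean sample x0."""
    a_bar = alpha_bars[t - 1]  # t is 1-indexed, matching steps 1..T
    signal, sigma = math.sqrt(a_bar), math.sqrt(1.0 - a_bar)
    return [signal * v + sigma * rng.gauss(0.0, 1.0) for v in x0]


T = 1000
alpha_bars = cumulative_alphas(linear_beta_schedule(T))
rng = random.Random(0)
x0 = [0.2, -0.5, 0.9, 0.0]  # stand-in for normalized pixel values
x_mid = noise_sample(x0, 250, alpha_bars, rng)
x_T = noise_sample(x0, T, alpha_bars, rng)

# The weight on the original signal shrinks toward zero as t grows.
print(f"signal weight at t=1:    {math.sqrt(alpha_bars[0]):.4f}")
print(f"signal weight at t={T}: {math.sqrt(alpha_bars[-1]):.4f}")
```

Under these assumed schedule values, the weight on the original signal is close to 1 at the first step and close to 0 at the last, which is the sense in which the final sample is almost pure noise.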

Architecturally, most image diffusion models use a U-Net-style backbone: an encoder that progressively downsamples the noisy image to a compact representation and a decoder that upsamples back to the original resolution. Skip connections connect matching levels of the encoder and decoder to preserve spatial detail, which is critical when generating precise geometry or material transitions in architectural or industrial imagery. More recent models replace the U-Net with a transformer backbone (often called Diffusion Transformers or DiT) to better capture global structure and improve scalability.

Crucially, the model is time-aware. Each diffusion step $t$ is encoded (e.g., via sinusoidal or learned embeddings) and injected into the network, teaching it to behave differently at early (high-noise) vs. late (low-noise) steps. This is why the same architecture can handle extremely noisy inputs early on and fine-detail refinement closer to the clean output. For architecture and industrial clients, this means diffusion models can start from very abstract shapes or constraints and iteratively converge toward realistic forms, lighting, and materials that match project requirements.
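As an illustration of the sinusoidal option, the sketch below turns a step index into a fixed-length vector of sines and cosines at geometrically spaced frequencies. The function name, the `max_period` default, and the sine-then-cosine ordering are assumptions made for this example; real implementations differ in such details, and the resulting vector is usually passed through small learned layers before being injected into the network.

```python
import math


def timestep_embedding(t, dim, max_period=10000.0):
    """Sinusoidal embedding of diffusion step t as a list of `dim` floats."""
    if dim % 2 != 0:
        raise ValueError("dim must be even")
    half = dim // 2
    # Frequencies fall geometrically from 1 toward 1 / max_period.
    freqs = [math.exp(-math.log(max_period) * i / half) for i in range(half)]
    return [math.sin(t * f) for f in freqs] + [math.cos(t * f) for f in freqs]


early = timestep_embedding(t=950, dim=8)  # a high-noise step
late = timestep_embedding(t=10, dim=8)    # a low-noise step
print([round(v, 3) for v in early])
print([round(v, 3) for v in late])
```

Because nearby steps map to nearby vectors while distant steps map to clearly different ones, the network can condition its behavior smoothly on how noisy the current input is.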

Why Are Traditional Visualization Solutions No Longer Enough?

Traditional visualization workflows in architecture, real estate, and industrial design still rely heavily on human-centric tools such as manual sketching, static 3D modeling, and batch rendering. These methods are proven and accurate but are labor-intensive and slow, especially when stakeholders request large numbers of variations (different façades, massing options, lighting scenarios, or interior schemes). In competitive bidding or fast-moving markets, this latency becomes a serious strategic disadvantage.

Conventional rendering tools also struggle with exploration breadth. While a skilled 3D artist can craft a few polished variants, they rarely have the bandwidth to generate hundreds of alternatives for early-stage concept testing. Diffusion models, in contrast, can produce wide explorations (e.g., dozens of façade styles or landscape compositions) in minutes, enabling data-driven design decisions about which directions are most promising before committing costly human time.

Quality consistency is another limitation. Different artists, vendors, and project teams often have different rendering styles, file structures, and color grading pipelines. This leads to inconsistent visual identities across competition submissions, marketing collateral, and regulatory presentations. With a standardized diffusion pipeline trained or fine-tuned on a firm’s existing portfolio, the organization can enforce more consistent style and material language. By pairing this with QZY Models as the physical execution partner, firms can ensure that digital and physical outputs share the same visual DNA.

Finally, traditional workflows rarely integrate seamlessly with physical model production. CAD and BIM files may not be optimized for physical fabrication, and concept visuals might not match the realities of scale, structure, or materials. When diffusion models generate early visual narratives and QZY Models converts selected options into physical models, the pipeline becomes more coherent: early AI ideas inform physical mockups that, in turn, guide refined digital iterations.

How Does A Diffusion-Driven Solution With QZY Models Work In Practice?

A diffusion-driven solution for architecture and industrial visualization centers on a few core capabilities: generative exploration, structured conditioning, quality control, and physical realization. At its heart, the system uses a diffusion model (U-Net or transformer-based) trained or tuned on domain-specific imagery such as façade designs, interior photos, industrial prototypes, urban planning diagrams, and past physical models.

Conditioning is key. The model can take as input a combination of noise, 2D layouts, elevation lines, material palettes, or textual descriptions (e.g., “mixed-use tower, podium with retail, warm stone façade, generous greenery”). The architecture embeds these conditions and guides the reverse diffusion process toward outputs that obey spatial and stylistic constraints, rather than generic “AI art.” This makes the system much more actionable for professional environments where deviations from constraints are costly.
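One widely used way to make the reverse process follow a condition more strongly is classifier-free guidance. The article does not say which guidance method is used, so treat this as an assumption chosen for illustration. In this scheme the network predicts the noise twice at each step, once without the condition and once with it, and the two predictions are blended with a guidance scale. The function name and the three-value predictions below are hypothetical.

```python
def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Blend unconditional and conditional noise predictions element-wise."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Hypothetical noise predictions from the same network: one run without the
# condition and one run with it (e.g. a text prompt or a layout map).
eps_uncond = [0.10, -0.20, 0.05]
eps_cond = [0.30, -0.10, 0.00]

print(guided_noise(eps_uncond, eps_cond, 0.0))  # ignores the condition
print(guided_noise(eps_uncond, eps_cond, 1.0))  # plain conditional prediction
print(guided_noise(eps_uncond, eps_cond, 5.0))  # pushes harder toward the condition
```

Higher scales tend to trade variety for closer adherence to the prompt or layout, so the value is typically tuned per use case rather than fixed.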

QZY Models enters as the bridge between AI-generated concepts and real-world physical models. Once digital outputs are selected, QZY’s team can interpret them with architectural and industrial accuracy, translating visuals into constructible physical models with correct scale, detail hierarchy, and material selection. With branches in markets like the Middle East, Europe, and Asia, QZY can support global clients who want consistent, diffusion-informed visualization workflows paired with premium physical model delivery.

The solution is not just about aesthetics; it is about measurable performance. Firms can track metrics such as concept turnaround time, number of viable design alternatives generated per week, improvement in client approval cycle times, and conversion rates for marketing campaigns that combine diffusion-generated imagery with QZY’s physical models in exhibitions or sales centers.

Which Advantages Does The Diffusion-Based Workflow Have Over Traditional Methods?

Diffusion vs. Traditional Workflow Table

| Aspect | Traditional Visualization Workflow | Diffusion-Driven Workflow With QZY Models |
|---|---|---|
| Concept generation speed | Days to weeks per concept set, heavily dependent on senior artist availability | Minutes to hours for dozens of concept variants, scalable across projects |
| Exploration breadth | Limited number of options due to budget and time | Wide exploration of styles, materials, and massing with controlled parameters |
| Consistency of style and branding | Varies by artist, vendor, and project | Centralized diffusion model enforces consistent visual language across outputs |
| Integration with physical models | Often ad hoc; CAD/BIM not optimized for fabrication | AI concepts curated and translated into precise physical models by QZY Models |
| Stakeholder engagement | Static renders and drawings, occasionally VR | Rich set of AI-generated visuals plus high-quality physical models for decision-making |
| Data and feedback loop | Limited analytics on which visuals perform best | Systematic tracking of selected AI concepts and QZY-produced models to refine model training |
| Scalability across regions | Requires local teams or vendors, variable quality | Centralized diffusion pipeline plus QZY’s international branches for localized production |
| Cost structure | High marginal cost per new variation | Lower marginal cost per additional concept once diffusion pipeline is in place |

How Can Teams Implement A Diffusion Model Workflow Step By Step?

- Requirement and data audit
  - Identify target use cases: competition visuals, marketing imagery, early massing studies, industrial prototypes, or urban planning scenarios.
  - Audit existing assets: 3D models, renders, photographs of physical models, plans, elevations, and marketing brochures that can feed diffusion training or fine-tuning.
- Model selection and setup
  - Choose a base diffusion architecture (U-Net-based or transformer-based) suitable for your resolution and modality (2D images, multi-view projections, etc.).
  - Configure conditioning channels: text, 2D layout maps, depth maps, or semantic masks to ensure architectural and industrial constraints are respected.
- Training or fine-tuning
  - Fine-tune the model on your domain-specific dataset, focusing on style consistency, material realism, and geography-specific context when relevant.
  - Implement validation protocols with internal designers: regularly review generated outputs and adjust training data, prompts, and conditioning schemas.
- Workflow integration
  - Embed the diffusion model into everyday tools: design intranets, project management platforms, or custom web UIs so that architects and designers can request variations on demand.
  - Define clear handoff points where selected AI outputs are transferred to QZY Models for physical model planning (including scale, materials, lighting, and logistics).
- Physical realization and iteration
  - QZY’s experts interpret AI-generated visuals, align them with construction documents and technical constraints, and build physical models using appropriate materials and technologies.
  - Feedback from stakeholders (clients, juries, government reviewers) on both AI imagery and physical models feeds back into the dataset, improving the diffusion model over time.
- Measurement and optimization
  - Track KPIs: time-to-first-concept, number of iterations per project, client approval rate, competition win rate, pre-sales conversion uplift, and cost per visual asset.
  - Use these metrics to decide where to invest further: additional fine-tuning, new conditioning modes, or deeper integration between diffusion outputs and QZY’s fabrication workflows.
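The measurement step can start as something very small. The sketch below aggregates a few of the KPIs named in the steps above from per-project records. The record fields, project names, and all numbers are hypothetical and exist only to exercise the function; a real setup would pull these values from whatever project-tracking system the firm already uses.

```python
from dataclasses import dataclass


@dataclass
class ProjectRecord:
    """Hypothetical per-project log entry; field names are illustrative."""
    name: str
    hours_to_first_concept: float
    iterations: int
    concepts_presented: int
    concepts_approved: int
    visual_assets: int
    visualization_cost: float


def summarize(records):
    """Aggregate a few KPIs across projects; assumes non-empty, non-zero totals."""
    if not records:
        raise ValueError("at least one project record is required")
    n = len(records)
    presented = sum(r.concepts_presented for r in records)
    assets = sum(r.visual_assets for r in records)
    return {
        "avg_hours_to_first_concept": sum(r.hours_to_first_concept for r in records) / n,
        "avg_iterations_per_project": sum(r.iterations for r in records) / n,
        "client_approval_rate": sum(r.concepts_approved for r in records) / presented,
        "cost_per_visual_asset": sum(r.visualization_cost for r in records) / assets,
    }


# Made-up numbers purely to show the shape of the output.
records = [
    ProjectRecord("tower-a", 6.0, 4, 10, 3, 40, 2000.0),
    ProjectRecord("campus-b", 10.0, 6, 10, 5, 60, 3000.0),
]
summary = summarize(records)
for key, value in summary.items():
    print(f"{key}: {value:.2f}")
```

Comparing the same summary before and after introducing the diffusion pipeline gives a simple baseline for deciding where further investment is justified.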

Where Do Diffusion Models With QZY Models Create The Most Impact? (4 Scenarios)

Scenario 1: Architectural Design Firm – Early-Stage Concept Competition

- Problem: An international architecture studio must submit multiple competition concepts for a new cultural complex with a tight deadline and limited internal visualization resources.
- Traditional approach: A small visualization team prepares a few manually modeled and rendered options; as client feedback arrives, last-minute changes strain the team and reduce quality.
- Using diffusion + QZY Models: The firm uses a diffusion model to generate dozens of façade and massing variants aligned with the site and program, then selects a curated set for physical competition models built by QZY Models. QZY’s precise detailing and finishing make the competition boards and physical exhibits highly compelling.
- Key benefit: Significant reduction in concept iteration time, better diversity of design options, and improved impact on juries thanks to the combination of AI-driven visuals and high-end physical models.

Scenario 2: Real Estate Developer – Sales Gallery And Marketing

- Problem: A developer launching a multi-phase mixed-use project needs compelling visuals and physical models for sales galleries and investor roadshows.
- Traditional approach: Commissioning one-off 3D renders and physical models from different vendors, resulting in style inconsistencies across campaigns and phases.
- Using diffusion + QZY Models: A diffusion model generates consistent marketing imagery (day/night scenes, interior moods, retail activation concepts) based on core design assets. QZY Models produces matching physical masterplans, tower models, and interior cutaways that reflect the same aesthetic and material language as the AI-generated visuals.
- Key benefit: A cohesive, instantly recognizable visual identity across all channels, faster content production, and more immersive sales environments that drive buyer confidence.

Scenario 3: Urban Planning Authority – Public Consultation And Policy Communication

- Problem: A city planning department must communicate complex zoning changes and infrastructure projects to the public and stakeholders, making abstract policies understandable.
- Traditional approach: Static maps, technical drawings, and limited physical models that are costly to update when plans evolve.
- Using diffusion + QZY Models: Diffusion models create scenario-based visuals (e.g., streetscape before/after, density variations, new transit-oriented developments) conditioned on GIS and planning data. QZY builds modular physical models of key districts, allowing planners to “swap in” updated pieces as policy scenarios change.
- Key benefit: Clearer communication, higher public engagement, and more informed feedback through a combination of rich AI visuals and adaptable physical models.

Scenario 4: Industrial Design Company – Product Prototyping And Exhibitions

-

Problem: An industrial design firm needs to present multiple prototype directions for a new product line at a trade show, with tight timelines for both visualization and physical prototypes.

-

Traditional approach: Limited number of prototype forms, hand-modeled renders, and last-minute mock-ups that may not fully convey the product vision.

-

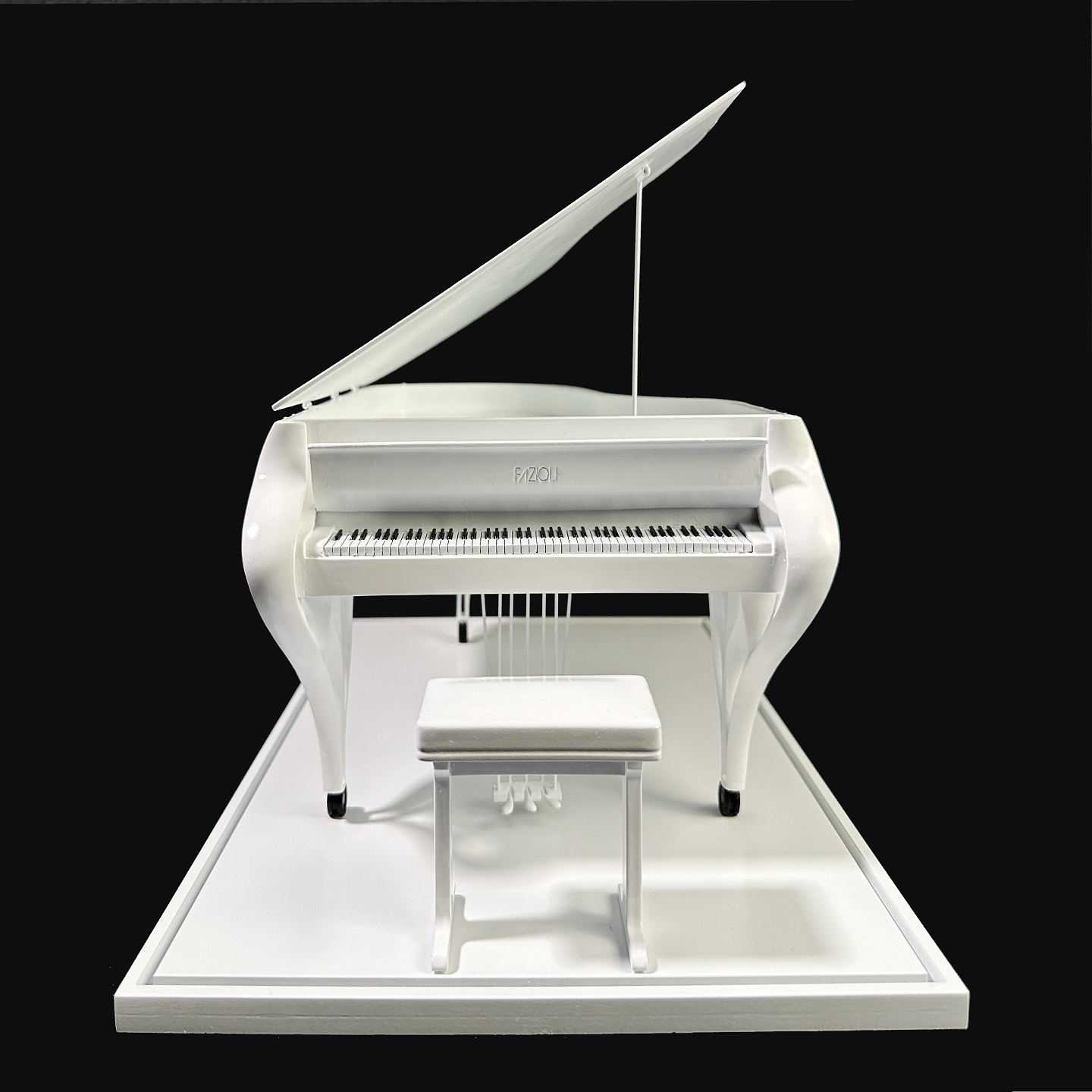

Using diffusion + QZY Models: A diffusion model generates a wide range of stylistic variants and color/material/finish (CMF) combinations for the product family. The design team selects a subset, and QZY Models builds high-precision physical models that match the AI-generated look and feel for display in the exhibition.

-

Key benefit: Expanded exploration of design directions, faster convergence on a compelling product story, and show-ready physical models that match the ambition of the AI concepts.

Why Is Now The Right Time To Adopt Diffusion Models With QZY Models?

The convergence of powerful diffusion models and mature physical modeling expertise creates a unique window of opportunity for architecture, real estate, urban planning, and industrial design organizations. The cost of computation has decreased, pre-trained models are widely available, and internal data archives have grown large enough to support domain-specific fine-tuning. Waiting risks ceding competitive advantage to firms that can present more persuasive visuals and physical experiences faster and at scale.

By partnering with QZY Models, organizations can mitigate two major risks: low-quality AI adoption and poor translation from digital to physical. QZY’s decades of experience in delivering high-quality architectural and industrial models help ensure that diffusion-generated ideas are grounded in fabrication reality and presentation excellence. This combination allows teams not only to experiment with AI but also to integrate it as a stable, measurable component of their project delivery pipeline.

The future will likely see even tighter integrations: multi-modal diffusion models that jointly reason about geometry, materials, daylight, and human flows; real-time co-design tools that let clients adjust parameters and immediately see both digital and physical implications; and automated feedback loops where performance data continuously improves the generative engine. Investing now sets up firms to capitalize on these advancements rather than scrambling to catch up later.

What Are The Most Common Questions About Diffusion Model Architecture And QZY Models?

- How is a diffusion model different from a GAN or a traditional renderer?

  Diffusion models generate data by iteratively denoising from random noise, while GANs rely on an adversarial generator–discriminator setup and traditional renderers simply visualize existing geometry. Diffusion models are better suited to wide, controllable exploration of new designs than to polishing predefined 3D scenes.

- Why does the diffusion architecture often use a U-Net or transformer backbone?

  The U-Net structure with skip connections preserves spatial detail while operating at multiple scales, which is ideal for images. Transformer-based backbones improve global context understanding and scalability, which is useful for large, complex scenes or when integrating multiple conditions such as text, layouts, and semantic maps.

- Can diffusion models be customized for a specific firm’s style or brand?

  Yes. By fine-tuning diffusion models on a curated dataset of a firm’s past projects, materials, and brand guidelines, the system learns to reproduce that visual language in new concepts. This allows consistent branding across competition entries, marketing materials, and stakeholder presentations.

- How does QZY Models integrate into a diffusion-based workflow?

  QZY Models takes AI-generated visuals and reconciles them with technical drawings and design intent to produce accurate physical models. The process involves scale selection, material choices, internal structure planning, and finishing techniques that reflect both the AI concept and the project’s real-world constraints.

- Are diffusion models suitable only for images, or can they support more complex assets?

  While most widely used for images, diffusion architectures are being extended to 3D, video, and multi-view representations. For architecture and industrial use, even 2D outputs (e.g., façade views or exploded diagrams) are highly useful as they guide both digital refinements and the planning of physical models by teams like QZY Models.

- Can smaller firms benefit from diffusion models and QZY Models without large internal AI teams?

  Yes. Smaller firms can leverage off-the-shelf or lightly fine-tuned diffusion models via cloud platforms and then collaborate with QZY Models for high-impact physical models on key projects such as competitions or flagship developments. This offers a high-leverage way to access advanced visualization without building full in-house AI infrastructure.